incrementalLearner

Description

IncrementalMdl = incrementalLearner(Mdl) returns a one-class SVM model IncrementalMdl for anomaly detection, initialized using the parameters provided in the one-class SVM model Mdl. Because its property values reflect the knowledge gained from Mdl, IncrementalMdl can detect anomalies given new observations, and it is warm, meaning that the incremental fit function can return scores and detect anomalies.

IncrementalMdl = incrementalLearner(Mdl,Name=Value) uses additional options specified by one or more name-value arguments. Some options require IncrementalMdl to be prepared for incremental learning before fit updates the score threshold for anomaly detection. For example, Solver="sgd",EstimationPeriod=500 specifies the stochastic gradient descent solver and an estimation period of 500 observations for estimating model hyperparameters prior to training.

Examples

Train a one-class SVM model by using ocsvm, convert it to an incremental learner model, fit the incremental model to streaming data, and detect anomalies. Transfer training options from traditional to incremental learning.

Load Data

Load the 1994 census data stored in census1994.mat. The data set consists of demographic data from the US Census Bureau.

load census1994.mat

The fit function of incrementalOneClassSVM does not support categorical predictors and does not use observations with missing values. Remove missing values in the data to reduce memory consumption and speed up training.

adultdata = rmmissing(adultdata); adulttest = rmmissing(adulttest);

Remove the categorical predictors from the data.

Xtrain = removevars(adultdata,["workClass","education","marital_status", ...
    "occupation","relationship","race","sex","native_country","salary"]);
Xstream = removevars(adulttest,["workClass","education","marital_status", ...
    "occupation","relationship","race","sex","native_country","salary"]);

Train One-Class SVM Model

Fit a one-class SVM model to the training data. Specify a random stream for reproducibility, and an anomaly contamination fraction of 0.001. Set KernelScale to "auto" so that the software selects an appropriate kernel scale parameter using a heuristic procedure.

rng(0,"twister"); % For reproducibility
TTMdl = ocsvm(Xtrain,KernelScale="auto",ContaminationFraction=0.001, ...
    RandomStream=RandStream("mlfg6331_64"))

TTMdl =

OneClassSVM

CategoricalPredictors: []

ContaminationFraction: 1.0000e-03

ScoreThreshold: -0.0678

PredictorNames: {'age' 'fnlwgt' 'education_num' 'capital_gain' 'capital_loss' 'hours_per_week'}

KernelScale: 9.3699e+04

Lambda: 0.1632

Properties, Methods

TTMdl is a OneClassSVM model object representing a traditionally trained one-class SVM model.

Convert Trained Model

Convert the traditionally trained one-class SVM model to a one-class SVM model for incremental learning.

IncrementalMdl = incrementalLearner(TTMdl);

IncrementalMdl is an incrementalOneClassSVM model object that is ready for incremental learning and anomaly detection.

Fit Incremental Model and Detect Anomalies

Perform incremental learning on the Xstream data by using the fit function. To simulate a data stream, fit the model in chunks of 100 observations at a time. At each iteration:

Process 100 observations.

Overwrite the previous incremental model with a new one fitted to the incoming observations.

Store medianscore, the median score value of the data chunk, to see how it evolves during incremental learning.

Store threshold, the score threshold value for anomalies, to see how it evolves during incremental learning.

Store numAnom, the number of detected anomalies in the chunk, to see how it evolves during incremental learning.

n = numel(Xstream(:,1));
numObsPerChunk = 100;
nchunk = floor(n/numObsPerChunk);
medianscore = zeros(nchunk,1);
threshold = zeros(nchunk,1);
numAnom = zeros(nchunk,1);

% Incremental fitting
rng("default"); % For reproducibility
for j = 1:nchunk
    ibegin = min(n,numObsPerChunk*(j-1) + 1);
    iend = min(n,numObsPerChunk*j);
    idx = ibegin:iend;
    [IncrementalMdl,tf,scores] = fit(IncrementalMdl,Xstream(idx,:));
    medianscore(j) = median(scores);
    numAnom(j) = sum(tf);
    threshold(j) = IncrementalMdl.ScoreThreshold;
end
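The chunk indexing used in the loop can be checked in isolation. The following is a minimal sketch that uses a hypothetical stream length of 1050 observations (not the size of Xstream) to show how floor determines the number of chunks and that any trailing partial chunk is not processed.

```matlab
% Illustrative values only: n is a hypothetical stream length.
n = 1050;
numObsPerChunk = 100;
nchunk = floor(n/numObsPerChunk);   % Number of complete chunks

numProcessed = 0;
for j = 1:nchunk
    ibegin = min(n,numObsPerChunk*(j-1) + 1);
    iend = min(n,numObsPerChunk*j);
    numProcessed = numProcessed + numel(ibegin:iend);
end

% Observations beyond the last complete chunk are never passed to fit.
numSkipped = n - numProcessed;
fprintf("%d chunks, %d observations processed, %d left over\n", ...
    nchunk,numProcessed,numSkipped)
```

If the leftover observations matter for your application, one option is to pass them to fit in a final, smaller chunk after the loop.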

Analyze Incremental Model During Training

To see how the median score, score threshold, and number of detected anomalies per chunk evolve during training, plot them on separate tiles.

tiledlayout(3,1);
nexttile
plot(medianscore)
ylabel("Median Score")
xlabel("Iteration")
xlim([0 nchunk])
nexttile
plot(threshold)
ylabel("Score Threshold")
xlabel("Iteration")
xlim([0 nchunk])
nexttile
plot(numAnom,"+")
ylabel("Number of Anomalies")
xlabel("Iteration")
xlim([0 nchunk])
ylim([0 max(numAnom)+0.2])

totalAnomalies=sum(numAnom)

totalAnomalies = 16

anomfrac= totalAnomalies/n

anomfrac = 0.0011

The median score remains relatively constant at 26 for the first 58 iterations, after which it begins to rise. After 9 iterations, the score threshold begins to steadily drop from its initial value of 0. The software detects 16 anomalies in the Xstream data, yielding a total contamination fraction of 0.0011. You can suppress the output of scores and anomalies returned by fit during the initial iterations of incremental learning, when the model is still approaching a steady state, by specifying ScoreWarmupPeriod > 0 when you create IncrementalMdl using incrementalLearner.

Train a one-class SVM model by using ocsvm, and convert it to an incremental learner model that uses the stochastic gradient descent solver. Fit the incremental learner model to streaming data, and detect anomalies. Transfer training options from traditional to incremental learning.

Load Data

Load the 1994 census data stored in census1994.mat. The data set consists of demographic data from the US Census Bureau.

load census1994.mat

The fit function of incrementalOneClassSVM does not support categorical predictors and does not use observations with missing values. Remove missing values in the data to reduce memory consumption and speed up training.

adultdata = rmmissing(adultdata); adulttest = rmmissing(adulttest);

Remove the categorical predictors.

Xtrain = removevars(adultdata,["workClass","education","marital_status", ...
    "occupation","relationship","race","sex","native_country","salary"]);
Xstream = removevars(adulttest,["workClass","education","marital_status", ...
    "occupation","relationship","race","sex","native_country","salary"]);

Train One-Class SVM Model

Fit a one-class SVM model to the training data. Specify a random stream for reproducibility, and an anomaly contamination fraction of 0.001. Set KernelScale to "auto" so that the software selects an appropriate kernel scale parameter using a heuristic procedure.

rng(0,"twister"); % For reproducibility
TTMdl = ocsvm(Xtrain,ContaminationFraction=0.001, ...
    KernelScale="auto",RandomStream=RandStream("mlfg6331_64"), ...
    StandardizeData=true)

TTMdl =

OneClassSVM

CategoricalPredictors: []

ContaminationFraction: 1.0000e-03

ScoreThreshold: 0.1013

PredictorNames: {'age' 'fnlwgt' 'education_num' 'capital_gain' 'capital_loss' 'hours_per_week'}

KernelScale: 2.6954

Lambda: 0.1600

Properties, Methods

TTMdl is a OneClassSVM model object representing a traditionally trained one-class SVM model.

Convert Trained Model

Convert the traditionally trained one-class SVM model to a one-class SVM model for incremental learning. Specify the standard SGD solver and an estimation period of 5000 observations (the default is 1000 when a learning rate is required).

IncrementalMdl = incrementalLearner(TTMdl,Solver="sgd", ...
    EstimationPeriod=5000);
details(IncrementalMdl)

incrementalOneClassSVM with properties:

KernelScale: 2.6954

Lambda: 0.1600

NumExpansionDimensions: 256

SolverOptions: [1×1 struct]

Solver: 'sgd'

FittedLoss: 'hinge'

Mu: [38.4379 1.8979e+05 10.1213 1.0920e+03 88.3725 40.9312]

Sigma: [13.1347 1.0565e+05 2.5500 7.4063e+03 404.2984 11.9800]

EstimationPeriod: 5000

IsWarm: 0

ContaminationFraction: 1.0000e-03

NumTrainingObservations: 0

NumPredictors: 6

ScoreThreshold: 0.1021

ScoreWarmupPeriod: 0

PredictorNames: {'age' 'fnlwgt' 'education_num' 'capital_gain' 'capital_loss' 'hours_per_week'}

ScoreWindowSize: 1000

Methods, Superclasses

IncrementalMdl is an incrementalOneClassSVM model object that is ready for incremental learning and anomaly detection.

Fit Incremental Model and Detect Anomalies

Perform incremental learning on the Xstream data by using the fit function. To simulate a data stream, fit the model in chunks of 100 observations at a time. At each iteration:

Process 100 observations.

Overwrite the previous incremental model with a new one fitted to the incoming observations.

Store medianscore, the median score value of the data chunk, to see how it evolves during incremental learning.

Store threshold, the score threshold value for anomalies, to see how it evolves during incremental learning.

Store numAnom, the number of detected anomalies in the chunk, to see how it evolves during incremental learning.

n = numel(Xstream(:,1));
numObsPerChunk = 100;
nchunk = floor(n/numObsPerChunk);
medianscore = zeros(nchunk,1);
threshold = zeros(nchunk,1);
numAnom = zeros(nchunk,1);

% Incremental fitting
for j = 1:nchunk
    ibegin = min(n,numObsPerChunk*(j-1) + 1);
    iend = min(n,numObsPerChunk*j);
    idx = ibegin:iend;
    [IncrementalMdl,tf,scores] = fit(IncrementalMdl,Xstream(idx,:));
    medianscore(j) = median(scores);
    numAnom(j) = sum(tf);
    threshold(j) = IncrementalMdl.ScoreThreshold;
end

Analyze Incremental Model During Training

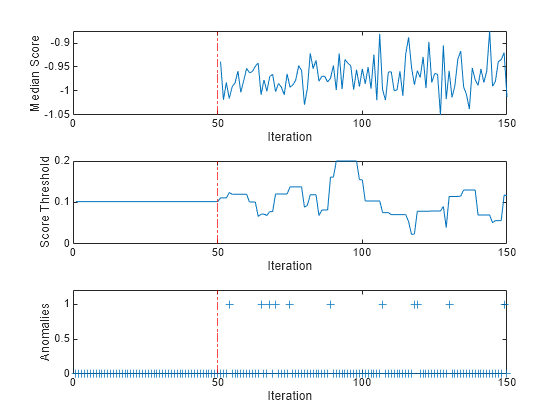

To see how the median score, score threshold, and number of detected anomalies per chunk evolve during training, plot them on separate tiles.

tiledlayout(3,1);
nexttile
plot(medianscore)
ylabel("Median Score")
xlabel("Iteration")
xline(IncrementalMdl.EstimationPeriod/numObsPerChunk,"r-.")
xlim([0 nchunk])
nexttile
plot(threshold)
ylabel("Score Threshold")
xlabel("Iteration")
xline(IncrementalMdl.EstimationPeriod/numObsPerChunk,"r-.")
xlim([0 nchunk])
nexttile
plot(numAnom,"+")
ylabel("Anomalies")
xlabel("Iteration")
xline(IncrementalMdl.EstimationPeriod/numObsPerChunk,"r-.")
xlim([0 nchunk])
ylim([0 max(numAnom)+0.2])

totalanomalies=sum(numAnom)

totalanomalies = 11

anomfrac= totalanomalies/(n-IncrementalMdl.EstimationPeriod)

anomfrac = 0.0011

During the estimation period, fit estimates the learning rate using the observations, and does not fit the model or update the score threshold. After the estimation period, fit updates the model and returns the observation scores and the indices of observations with scores above the score threshold value as anomalies. A negative score value with large magnitude indicates a normal observation, and a large positive value indicates an anomaly. The median score fluctuates between approximately 1 and 0.9. The score threshold fluctuates between 0.02 and 0.2. The software detects 11 anomalies in the Xstream data after the estimation period, yielding a total contamination fraction of 0.0011.

Input Arguments

Mdl

Traditionally trained one-class SVM model for anomaly detection, specified as a OneClassSVM model object returned by ocsvm.

Note

Incremental learning functions support only numeric input predictor data. If Mdl was trained on categorical data, you must prepare an encoded version of the categorical data to use incremental learning functions. Use dummyvar to convert each categorical variable to a numeric matrix of dummy variables. Then, concatenate all dummy variable matrices and any other numeric predictors, in the same way that the training function encodes categorical data. For more details, see Dummy Variables.
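As a minimal sketch of the encoding step described in this note, the following code converts one categorical variable to dummy variables and concatenates the result with a numeric predictor. The variable names color and height and their values are hypothetical; the column order you use must match the encoding applied when Mdl was trained.

```matlab
% Hypothetical predictors: one categorical variable and one numeric variable.
color = categorical(["red";"blue";"red"]);
height = [1.2; 3.4; 5.6];

% dummyvar returns one indicator column per category (here: blue, red).
D = dummyvar(color);

% Concatenate the dummy variables with the numeric predictor.
X = [D height];
disp(X)
```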

Name-Value Arguments

Specify optional pairs of arguments as Name1=Value1,...,NameN=ValueN, where Name is the argument name and Value is the corresponding value. Name-value arguments must appear after other arguments, but the order of the pairs does not matter.

Example: Solver="scale-invariant",ScoreWarmupPeriod=500 specifies the adaptive scale-invariant solver for objective optimization, and specifies processing 500 observations before the incremental fit function returns scores and detects anomalies.

General Options

EstimationPeriod

Number of observations processed by the incremental learner to estimate hyperparameters prior to training, specified as a nonnegative integer.

When processing observations during the estimation period, the software ignores observations that contain at least one missing value.

If Mdl is prepared for incremental learning (all hyperparameters required for training are specified), incrementalLearner forces EstimationPeriod to 0.

If Mdl is not prepared for incremental learning, incrementalLearner sets EstimationPeriod to 1000 and estimates the unknown hyperparameters.

For more details, see Estimation Period.

Example: EstimationPeriod=500

Data Types: single | double

Solver

Objective function minimization technique, specified as a value in this table.

| Value | Description | Notes |
|---|---|---|
| "scale-invariant" | Adaptive scale-invariant solver for incremental learning [1] | |
| "sgd" | Stochastic gradient descent (SGD) [3][2] | |
| "asgd" | Average stochastic gradient descent (ASGD) [4] | |

Data Types: char | string

SGD and ASGD Solver Options

BatchSize

Mini-batch size for the stochastic solvers, specified as a positive integer. This argument is not valid when Solver is "scale-invariant".

At each learning cycle during training, incrementalLearner uses BatchSize observations to compute the subgradient. The number of observations for the last mini-batch (last learning cycle in each function call of fit) can be smaller than BatchSize. For example, if you specify BatchSize=10 and supply 25 observations to fit, the function uses 10 observations for the first two learning cycles and uses 5 observations for the last learning cycle.

Example: BatchSize=5

Data Types: single | double
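The mini-batch split in the preceding example can be reproduced with a few lines of arithmetic. This is an illustrative sketch only; it mirrors the 25-observation example and does not call fit.

```matlab
% Values taken from the example above.
numObs = 25;      % Observations supplied in one call to fit
batchSize = 10;   % BatchSize value

% Number of learning cycles needed to consume all observations.
numCycles = ceil(numObs/batchSize);

% Every cycle uses batchSize observations except possibly the last one.
batchSizes = repmat(batchSize,1,numCycles);
batchSizes(end) = numObs - batchSize*(numCycles - 1);
disp(batchSizes)
```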

LearnRate

Initial learning rate, specified as "auto" or a positive scalar. This argument is not valid when Solver is "scale-invariant".

The learning rate controls the optimization step size by scaling the objective subgradient. LearnRate specifies an initial value for the learning rate, and LearnRateSchedule determines the learning rate for subsequent learning cycles.

When you specify "auto":

The initial learning rate is 0.7.

If EstimationPeriod > 0, fit changes the rate to 1/sqrt(1+max(sum(X.^2,2))) at the end of EstimationPeriod, where X is the predictor data collected during the estimation period.

Example: LearnRate=0.1

Data Types: single | double | char | string
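To make the "auto" formula concrete, the following sketch evaluates 1/sqrt(1+max(sum(X.^2,2))) for a small, hypothetical predictor matrix. The values in X are made up for illustration; in practice, X is the data that fit collects during the estimation period.

```matlab
% Hypothetical estimation-period data: 3 observations, 2 predictors.
X = [3 4; 0 1; 1 1];

% Squared Euclidean norm of each observation (row).
rowNormsSquared = sum(X.^2,2);

% Learning rate based on the largest squared row norm.
learnRate = 1/sqrt(1 + max(rowNormsSquared));
disp(learnRate)
```

Because the rate depends on the largest squared row norm, a single observation with large predictor values lowers the learning rate for all subsequent learning cycles.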

LearnRateSchedule

Learning rate schedule, specified as "decaying" or "constant", where LearnRate specifies the initial learning rate ɣ0.

| Value | Description |
|---|---|
| "constant" | The learning rate is ɣ0 for all learning cycles. |
| "decaying" | The learning rate at learning cycle t decays from the initial value ɣ0. |

Example: LearnRateSchedule="constant"

Data Types: char | string

Adaptive Scale-Invariant Solver Options

Shuffle

Flag for shuffling the observations at each iteration, specified as a value in this table.

| Value | Description |
|---|---|
| 1 (true) | The software shuffles the observations in an incoming chunk of data before the fit function fits the model. This action reduces bias induced by the sampling scheme. |
| 0 (false) | The software processes the data in the order received. |

This option is valid only when Solver is "scale-invariant". When Solver is "sgd" or "asgd", the software always shuffles the observations in an incoming chunk of data before processing the data.

Example: Shuffle=false

Data Types: logical

Anomaly Score Options

ScoreWarmupPeriod

Warm-up period before score output and anomaly detection (outside the estimation period, if EstimationPeriod > 0), specified as a nonnegative integer. The ScoreWarmupPeriod value is the number of observations to which the incremental model must be fit before the incremental fit function returns scores and detects anomalies.

Note

When processing observations during the score warm-up period, the software ignores observations that contain at least one missing value.

Data Types: single | double
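The following is a minimal sketch of how a score warm-up period might be requested. The synthetic data, the contamination fraction of 0.01, and the warm-up length of 200 observations are illustrative assumptions, not recommended settings.

```matlab
% Sketch under stated assumptions: synthetic numeric data stands in for a
% real training set and data stream.
rng(0,"twister");                 % For reproducibility
X = randn(500,3);                 % Hypothetical training data
Mdl = ocsvm(X,ContaminationFraction=0.01);

% Request a score warm-up period of 200 observations.
IncrementalMdl = incrementalLearner(Mdl,ScoreWarmupPeriod=200);
disp(IncrementalMdl.ScoreWarmupPeriod)

% Stream new data to fit in chunks of 100 observations.
Xstream = randn(400,3);           % Hypothetical streaming data
for j = 1:4
    idx = (100*(j-1) + 1):(100*j);
    IncrementalMdl = fit(IncrementalMdl,Xstream(idx,:));
end
```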

ScoreWindowSize

Running window size used to estimate the score threshold (ScoreThreshold), specified as a positive integer. The default ScoreWindowSize value is 1000.

If ScoreWindowSize is greater than the number of observations in the training data, the software determines ScoreThreshold by subsampling from the training data. Otherwise, ScoreThreshold is set to Mdl.ScoreThreshold.

Example: ScoreWindowSize=100

Data Types: single | double

Output Arguments

IncrementalMdl

One-class SVM model for incremental anomaly detection, returned as an incrementalOneClassSVM model object.

To initialize IncrementalMdl for incremental anomaly detection, incrementalLearner passes the values of the following properties of Mdl to the corresponding properties of IncrementalMdl.

| Property | Description |
|---|---|
| ContaminationFraction | Fraction of anomalies in the training data, a numeric scalar in the range [0,1] |
| KernelScale | Kernel scale parameter, a positive scalar |
| Lambda | Ridge (L2) regularization term strength, a nonnegative scalar. incrementalLearner sets IncrementalMdl.Lambda to NaN if Solver is "scale-invariant". |
| Mu | Predictor means of the training data, a numeric vector |
| NumExpansionDimensions | Number of dimensions of the expanded space, a positive integer |
| PredictorNames | Predictor variable names, a cell array of character vectors |
| ScoreThreshold | Threshold score for anomalies in the training data, a numeric scalar in the range (-Inf,Inf). If ScoreWindowSize is greater than the number of observations used to train Mdl, then incrementalLearner approximates ScoreThreshold by subsampling from the training data. Otherwise, incrementalLearner passes Mdl.ScoreThreshold to IncrementalMdl.ScoreThreshold. |
| Sigma | Predictor standard deviations of the training data, a numeric vector |

More About

Incremental learning, or online learning, is a branch of machine learning concerned with processing incoming data from a data stream, possibly given little to no knowledge of the distribution of the predictor variables, aspects of the prediction or objective function (including tuning parameter values), or whether the observations contain anomalies. Incremental learning differs from traditional machine learning, where enough data is available to fit to a model, perform cross-validation to tune hyperparameters, and infer the predictor distribution.

Anomaly detection is used to identify unexpected events and departures from normal behavior. In situations where the full data set is not immediately available, or new data is arriving, you can use incremental learning for anomaly detection to incrementally train a model so it adjusts to the characteristics of the incoming data.

Given incoming observations, an incremental learning model for anomaly detection does the following:

Computes anomaly scores

Updates the anomaly score threshold

Detects data points above the score threshold as anomalies

Fits the model to the incoming observations

For more information, see Incremental Anomaly Detection with MATLAB.

The adaptive scale-invariant solver for incremental learning, introduced in [1], is a gradient-descent-based objective solver for training linear predictive models. The solver is hyperparameter free, insensitive to differences in predictor variable scales, and does not require prior knowledge of the distribution of the predictor variables. These characteristics make it well suited to incremental learning.

The standard SGD and ASGD solvers are sensitive to differing scales among the predictor variables, resulting in models that can perform poorly. To achieve better accuracy using SGD and ASGD, you can standardize the predictor data, and tune the regularization and learning rate parameters. For traditional machine learning, enough data is available to enable hyperparameter tuning by cross-validation and predictor standardization. However, for incremental learning, enough data might not be available (for example, observations might be available only one at a time) and the distribution of the predictors might be unknown. These characteristics make parameter tuning and predictor standardization difficult or impossible to do during incremental learning.

The incremental fitting function for anomaly detection, fit, uses the more conservative ScInOL1 version of the algorithm.

Algorithms

During the estimation period, the incremental fitting function fit uses the first incoming EstimationPeriod observations to estimate (tune) hyperparameters required for incremental training. Estimation occurs only when EstimationPeriod is positive. This table describes the hyperparameters and when they are estimated, or tuned.

| Hyperparameter | Model Property | Usage | Conditions |
|---|---|---|---|
| Predictor means and standard deviations | Mu and Sigma | Standardize predictor data | The hyperparameters are estimated when both of these conditions apply: |
| Learning rate | LearnRate | Adjust the solver step size | The hyperparameter is estimated when both of these conditions apply: |
| Kernel scale parameter | KernelScale | Set a kernel scale parameter value for random feature expansion | The hyperparameter is estimated when you set KernelScale to "auto". |

During the estimation period, fit does not fit the model. At the end of the estimation period, the function updates the properties that store the hyperparameters.

If incremental learning functions are configured to standardize predictor variables, they do so using the means and standard deviations stored in the Mu and Sigma properties of the incremental learning model IncrementalMdl.

If you standardize the predictor data when you train the input model Mdl by using ocsvm, the following conditions apply:

incrementalLearner passes the means in Mdl.Mu and standard deviations in Mdl.Sigma to the corresponding incremental learning model properties.

Incremental learning functions always standardize the predictor data.

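When the incremental fitting function estimates predictor means and standard deviations, the function computes weighted means and weighted standard deviations using the estimation period observations. Specifically, the function standardizes predictor j ($x_j$) using

$$x_j^* = \frac{x_j - \mu_j^*}{\sigma_j^*},$$

where

$x_j$ is predictor j, and $x_{jk}$ is observation k of predictor j in the estimation period.

$$\mu_j^* = \frac{1}{\sum_k w_k^*} \sum_k w_k^* x_{jk}, \qquad (\sigma_j^*)^2 = \frac{1}{\sum_k w_k^*} \sum_k w_k^* \left(x_{jk} - \mu_j^*\right)^2$$

$$w_j^* = \frac{w_j}{\sum_{\forall j \in \text{Class } k} w_j} \, p_k,$$

where

$p_k$ is the prior probability of class k (Prior property of the incremental model).

$w_j$ is observation weight j.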
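The weighted standardization described above can be sketched numerically. This example is illustrative only: the data and weights are hypothetical, and it assumes a single class, so that the prior-probability factor equals 1 and the weights only need to be normalized by their sum.

```matlab
% Hypothetical estimation-period data: 4 observations of 1 predictor.
x = [1; 2; 3; 4];
w = [1; 1; 2; 2];          % Hypothetical observation weights

% Weighted mean and weighted standard deviation of the predictor.
mu = sum(w.*x)/sum(w);
sigma = sqrt(sum(w.*(x - mu).^2)/sum(w));

% Standardize the predictor using the weighted statistics.
xStandardized = (x - mu)/sigma;
disp([mu sigma])
```

After standardization, the weighted mean of xStandardized is 0 and its weighted variance is 1, which is the property the Mu and Sigma values are meant to provide.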

References

[1] Kempka, Michał, Wojciech Kotłowski, and Manfred K. Warmuth. "Adaptive Scale-Invariant Online Algorithms for Learning Linear Models." Preprint, submitted February 10, 2019. https://arxiv.org/abs/1902.07528.

[2] Langford, J., L. Li, and T. Zhang. “Sparse Online Learning Via Truncated Gradient.” J. Mach. Learn. Res., Vol. 10, 2009, pp. 777–801.

[3] Shalev-Shwartz, S., Y. Singer, and N. Srebro. “Pegasos: Primal Estimated Sub-Gradient Solver for SVM.” Proceedings of the 24th International Conference on Machine Learning, ICML ’07, 2007, pp. 807–814.

[4] Xu, Wei. “Towards Optimal One Pass Large Scale Learning with Averaged Stochastic Gradient Descent.” CoRR, abs/1107.2490, 2011.

Version History

Introduced in R2023b
