
If you have published add-ons on File Exchange, you may have noticed that we recently added a new, unique package name field to all add-ons. This enables future support for automated installation with the MATLAB Package Manager. This name will be a unique identifier for your add-on and does not affect the existing add-on title, any file names, or the URL of your add-on.

📝 Update and review until April 10

We generated default package names for all add-ons. You can review and update the package name for your add-ons until April 10, 2026. Review your package names now:

After April 10, you will need to create a new version to change your package name.

🚀 More changes coming with the MATLAB R2026b prerelease

Starting with the MATLAB R2026b prerelease, these package names will take effect. At that time, the package name may appear on the File Exchange page for your add-on.

Keep your eyes peeled for exciting changes coming soon to your add-ons on File Exchange!

Cantera is an open-source suite of tools for problems involving chemical kinetics, thermodynamics, and transport processes. Dr. Su Sun, a recent graduate of the Northeastern Chemical Engineering Ph.D. program, made significant contributions to the MATLAB interface for Cantera in Cantera Release 3.2.0, in collaboration with Dr. Richard West, other Cantera developers, and the MathWorks Advanced Support and Development Teams. As part of this release, the MATLAB interface for Cantera transitioned to the new MATLAB-C++ interface and expanded its unit testing. Further information is available here.

I began coding in MATLAB less than 2 months ago for a class at community college. Alongside the course content, I also completed the MATLAB Onramp and Introduction to Linear Algebra self-paced online courses. I think this is the most fun I've had coding since back when I used to make Scratch projects in elementary school. I'm kind of curious if I could recreate some of my favorite childhood Scratch games here.

Anyways, I just wanted to introduce myself since I plan to be really active this year. My name is Mehreen (meh like the meh emoji from the Emoji movie, reen like screen), I'm a data science undergrad sophomore from the U.S. and it's nice to meet you!

Dear all,

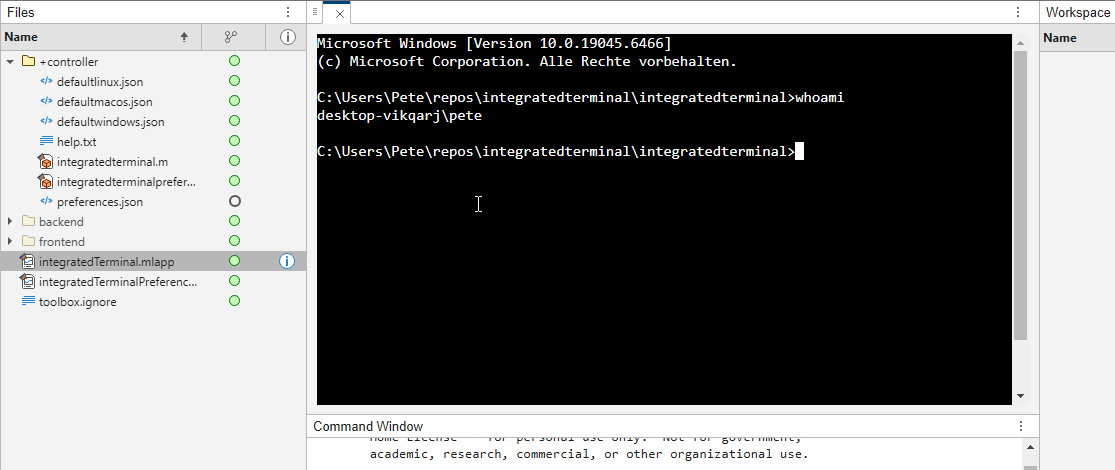

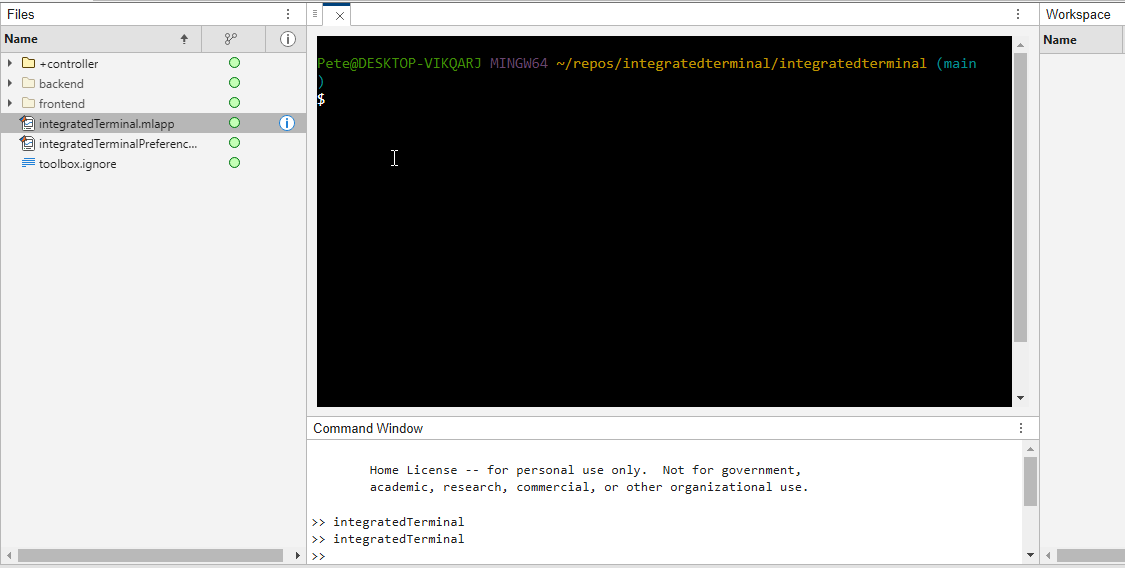

Recently I started working on a VS Code-style integrated terminal for the MATLAB IDE.

The terminal is installed as an app and runs inside a docked figure. You can launch the terminal by clicking on the app icon, running the command integratedTerminal or via keyboard shortcut.

It's possible to change the shell which is used. For example, I can set the shell path to C://Git//bin//bash.exe and use Git Bash on Windows. You can also change the theme. You can run multiple terminals.

I hope you like it and any feedback will be much appreciated. As soon as it's stable enough I can release it as a toolbox.

An emirp is a prime that is prime when viewed in both directions. They are not too difficult to find at a lower level. For example...

isprime([199 991])

Gosh, that was easy. But what happens if the number is a bit larger? The problem is, primes themselves tend to be rare on the number line when you get into thousands or tens of thousands of decimal digits. And recently, I read that a world record size prime had been found in this form. You have probably all heard of Matt Parker and Numberphile.

And so, I decided that MATLAB would be capable of doing better. Why not? After all, at the time, the record size emirp had only 10002 decimal digits.
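Before scaling up, the small case from the start of the post can be sketched for every prime below 1000. This is only an illustration, and it assumes the usual convention that palindromic primes such as 101 do not count as emirps (the post does not say either way). The variable names are placeholders.

```matlab
% List the emirps below 1000: primes whose digit reversal is a different prime.
p = primes(1000);                                     % all primes up to 1000
r = arrayfun(@(x) str2double(flip(num2str(x))), p);   % digit-reversed value of each prime
emirps = p(isprime(r) & r ~= p);                      % keep reversible primes, drop palindromes
disp(emirps)
```

Both 199 and 991 from the example above appear in the result. String reversal like this is fine for small numbers but says nothing about how to search at thousands of digits, which is what the rest of the post is about.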

How would I solve this problem? First, we can very simply write a potential emirp as

10^n + a

then we can form the flipped version as

ahat*10^(n-d) + 1

where ahat is the decimally flipped version of a, and d is one less than the number of decimal digits in a, so that both numbers have n+1 digits. Not all emirps will be of that form of course, but using all of those powers of 10 makes it easy to construct a large number and its reversed form. And that is a huge benefit in this. For example,

Pfor = sym(10)^101 + 943

Prev = 349*sym(10)^99 + 1

It is easier to view these numbers using a little code I wrote, one that redacts most of those boring zeros.

emirpdisplay(Pfor)

emirpdisplay(Prev)

And yes, they are both prime, and they both have 102 decimal digits.

isprime([Pfor,Prev])
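The forward and reversed forms can also be checked against a plain string reversal at a size that still fits exactly in double precision. This is a scaled-down sketch, not the 102-digit case: n = 12 is my choice, and d is taken to be one less than the digit count of a, which is the choice that reproduces the 10^99 in the example above.

```matlab
% Check that ahat*10^(n-d) + 1 is the digit reversal of 10^n + a (small n only).
n = 12;  a = 943;
Pfor = 10^n + a;                           % 1 followed by zeros, ending in 943
ahat = str2double(flip(num2str(a)));       % digits of a reversed: 349
d    = numel(num2str(a)) - 1;              % one less than the digit count of a
Prev = ahat*10^(n - d) + 1;                % 349 followed by zeros, ending in 1
matches = strcmp(flip(sprintf('%d', Pfor)), sprintf('%d', Prev));
disp(matches)
```

This only confirms the bookkeeping of the two forms; it makes no claim that either 13-digit number is prime.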

Sadly, even numbers that large are very small potatoes, at least in the world of large primes. So how do we solve for a much larger prime pair using MATLAB?

The first thing I want to do is to employ roughness at a high level. If a number is prime, then it is maximally rough. (I posted a few discussions about roughness some time ago.)

https://www.mathworks.com/matlabcentral/discussions/tips/879745-primes-and-rough-numbers-basic-ideas

In this case, I'm going to look for serious roughness, thus 2e9-rough numbers. Again, a number is k-rough if its smallest prime factor is greater than k. There are roughly 98 million primes below 2e9.
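As a small illustration of that definition, k-roughness can be tested by trial division against every prime up to k. The helper name isRough is hypothetical, and this brute-force form is only sensible for small k, nothing like 2e9.

```matlab
% x is k-rough when no prime p <= k divides it.
isRough = @(x, k) all(mod(x, primes(k)) ~= 0);

r10 = isRough(143, 10);   % 143 = 11*13, smallest prime factor 11 > 10, so true
r11 = isRough(143, 11);   % 11 divides 143, so false
disp([r10 r11])
```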

The general idea is to compute the remainders of 10^12345, modulo every prime in that set of primes below 2e9. This MUST be done using int64 or uint64 arithmetic, as doubles will start to fail you above

format short g

sqrt(flintmax)

The sqrt is in there because we will be multiplying numbers together here, and we need always to stay below intmax for the integer format you are working with. However, if we work in an integer format, we can get as high as 2e9 easily enough, by going to int64 or uint64.

sqrt(double(intmax('int64')))

And, yes, this means I could have gone as high as primes(3e9), however, I stopped at 2e9 due to the amount of RAM on my computer. 98 million primes seemed enough for this task. And even then, I found myself working with all of the cores on my computer. (Note that I found int64 arithmetic will only fire up the performance cores on your Mac via automatic multi-threading. Mine has 12 performance cores, even though it has 16 total cores.)

I computed the remainders of 10^12345 with respect to each prime in that set using a variation of the powermod algorithm. (Not powermod itself, which was itself not sufficiently fast for my purposes.) Once I had those 98 million remainders in a vector, then it became easy to use a variation of the sieve of Eratosthenes to identify 2e9-rough numbers.
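The remainder computation can be sketched as binary (square-and-multiply) modular exponentiation, vectorized over the whole list of primes in uint64. This is my own minimal version, not the author's code, and it uses primes below 1e5 rather than 2e9 so that it runs in a moment. Every intermediate product is below p^2, which is why the primes must stay below roughly 3e9 for uint64 to hold it without saturating.

```matlab
% Remainders of 10^12345 modulo every prime below 1e5, in uint64 arithmetic.
p    = uint64(primes(1e5));        % moduli, one per element
e    = 12345;                      % exponent, consumed bit by bit
base = mod(uint64(10), p);         % current power of 10, reduced mod each p
r    = ones(size(p), 'uint64');    % running result, starts at 10^0 = 1
while e > 0
    if mod(e, 2) == 1
        r = mod(r .* base, p);     % multiply in this bit's contribution
    end
    base = mod(base .* base, p);   % square for the next bit
    e = floor(e / 2);
end
disp(r(1:5))                       % remainders modulo 2, 3, 5, 7, 11
```

The loop runs once per bit of the exponent (14 passes here), and each pass is a handful of whole-array operations, which is the shape of computation MATLAB multi-threads well.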

For example, working at 101 decimal digits, if I search for primes of the form 10^101+a, with a in the interval [1,10000], there are 256 numbers of that form which are 2e9-rough. Roughness is a HUGE benefit, since as you can see here, I would not want to test for primality all 10000 possible integers from that interval.

Next, I flip those 256 rough numbers into their mirror image form. Which members of that set are also rough in the mirror image form? We would then see this further reduces the set to only 34 candidates we need test for primality which were rough in both directions. With now only a few direct tests for primality, we would find that pair of 102 digit primes shown above.
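The forward-direction sieve can be sketched at a much smaller scale. Here n = 12 so that 10^n fits in uint64 directly and no powermod step is needed, and the sieving primes stop at 1.1e6 rather than 2e9; both are my simplifications, and the variable names are hypothetical. For each prime p with remainder r = mod(10^n, p), the offsets a with p dividing 10^n + a are p - r, 2p - r, and so on.

```matlab
% Find offsets a in [1, A] for which 10^12 + a has no prime factor below 1.1e6.
A  = 10000;
N0 = uint64(1e12);                 % 10^12, exactly representable
p  = uint64(primes(1.1e6));        % sieving primes
r  = mod(N0, p);                   % remainder of 10^12 modulo each prime
isRoughOffset = true(1, A);
for k = 1:numel(p)
    step  = double(p(k));
    first = step - double(r(k));   % smallest a >= 1 with p(k) dividing 10^12 + a
    isRoughOffset(first:step:A) = false;
end
roughOffsets = find(isRoughOffset);
fprintf('%d of %d offsets survive the sieve\n', numel(roughOffsets), A);
```

Because 1.1e6 exceeds the square root of 10^12 + 10000, the survivors in this toy case are exactly the primes in the interval. At 101 or 12345 digits that is no longer true, and the survivors are only candidates. The mirror-image direction would be sieved the same way, with the remainders of the reversed form in place of r.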

Of course, I'm still needing to work with primes in the regime of 10000 plus decimal digits, and that means I need to be smarter about how I test a number to be prime. The isprime test given by sym/isprime only survives out to around 1000 decimal digits before it starts to get too slow. That means I need to perform Fermat tests to screen numbers for primality. If that indicates potential primality, I currently use a Miller-Rabin code to verify that result, one based on the tool java.math.BigInteger.isProbablePrime.
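A two-stage screen of that kind can be sketched with Java's BigInteger called from MATLAB. This is not the author's isProbablePrimeFLT; the helper name passesFermat2, the single base 2, and the certainty value 50 are all my assumptions. The BigInteger methods used (pow, add, subtract, modPow, equals, isProbablePrime) are standard Java.

```matlab
% Fermat screen to base 2, followed by BigInteger's probabilistic primality test.
one = java.math.BigInteger('1');
two = java.math.BigInteger('2');
ten = java.math.BigInteger('10');

% N passes the base-2 Fermat test when 2^(N-1) mod N equals 1.
passesFermat2 = @(N) two.modPow(N.subtract(one), N).equals(one);

N = ten.pow(101).add(java.math.BigInteger('943'));   % 10^101 + 943 from earlier in the post
if passesFermat2(N)
    isLikelyPrime = N.isProbablePrime(50);           % second-stage check on survivors only
else
    isLikelyPrime = false;
end
disp(isLikelyPrime)
```

The second stage matters because a Fermat test alone can be fooled: 341 = 11*31 passes to base 2 even though it is composite, while isProbablePrime rejects it.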

And since Wikipedia tells me the current world record known emirp was

117,954,861 * 10^11111 + 1 discovered by Mykola Kamenyuk

that tells me I need to look further out yet. I chose an exponent of 12345, so starting at 10^12345. Last night I set my Mac to work, with all cores a-fumbling, a-rumbling at the task as I slept. Around 4 am this morning, it found this number:

emirp = @(N,a) sym(10)^N + a;

Pfor = emirp(12345,10519197);

Prev = sym(flip(char(Pfor)));

emirpdisplay(Pfor)

emirpdisplay(Prev)

isProbablePrimeFLT([Pfor,Prev],210)

I'm afraid you will need to take my word for it that both also satisfy a more robust test of primality, as even a Miller-Rabin test will take more time than the MATLAB version we get for use in a discussion will allow. As far as a better test in the form of the MATLAB isprime utility to verify true primality, that test is still running on my computer. I'll check back in a few hours to see if it finished.

Anyway, the above numbers now form the new world record known emirp pair, at 12346 decimal digits. Yes, I do recognize this is still what I would call low hanging fruit, that having announced a largest prime of this form, someone else will find one yet larger in a few weeks or months. But even so, for the moment, MATLAB owns the world record!

If anyone else wants a version of the codes I used for the search, I've attached a version (emirpsearchpar.m) that employs the Parallel Computing Toolbox. I do have as well a serial version which is, of course, much, much slower. It would be fun to crowdsource a larger world record yet from the MATLAB community.

Hey folks in MATLAB community! I'm an engineering student from India messing around with deep learning/ML for spotting faults in power electronics stuff—like inverter issues or microgrid glitches in Simulink.

What's your take?

- Which toolbox rocks for this—Deep Learning one or Predictive Maintenance?

- Any gotchas when training on sim data vs real hardware?

- Cool workflows or GitHub links you've used?

Would love your real experiences! 😊

The "Issues" with Constant Properties

MATLAB's Constant properties can be rather difficult to deal with at times. For those unfamiliar, there are two distinct behaviors when accessing constant properties of a class. If a "static" pattern is used, ClassName.PropName, then the value as it was assigned to the property is returned; that is to say, you will have a nargout of 1. But, rather frustratingly, if an instance of the class is used when accessing the constant property, such as ArrayOfClassName.PropName, then your nargout will be equal to the number of elements in the array; this means that, functionally, the constant property access scheme is identical to the element-wise access you find on "instance" properties.

Motivation for Correcting Constant Property Behavior

This can be frustrating since constant properties are conceptually designed to tie data to a class. You could see this design pattern being useful where a superclass defines an abstract constant property that drives the behavior of subclasses; the subclasses define the value of the property and the superclass uses it. I would like to use this design to develop a custom display "MatrixDisplay" focused mixin (like the internal matlab.mixin.internal.MatrixDisplay). The idea is that missing element labels, invalid handle element labels, and other semantic values can be configured by the subclasses, conveniently just by setting the constant properties; these properties will be used by the superclass to substitute the display strings of appropriate elements. Most of the processing will happen within the superclass so as to enable simple, low-investment, opt-in display options for array-style classes.

The issue is that you cannot rely on constant property access to return the appropriate value when the instance you've been passed is empty. Excess outputs are also produced when the instance is non-scalar, but those extra values from the comma-separated list (CSL) are just ignored; while I'd imagine there is an effect on performance from generating the excess outputs (assuming there's no internal optimization for the unused outputs), this case still functions appropriately. As I enjoy exploring MATLAB, I found an internal indexing mixin class in the past that provides far greater control of dot indexing; I've done a good deal of neat things with it, though at the cost of great overhead when implementing cool "proof of concept/showcase" examples. Today I used it to quickly implement a mixin that "fixes" constant properties such that they always return as though they were called statically from the class name, as opposed to an instance.

A Simplistic Solution

To do this I just intercepted the property indexing, checked if it was constant, and used the DefaultValue property of the metadata to return the value. This works nicely since we are not required to attempt to initialize a "dummy" scalar array or generate a function handle; both of those would likely be slower and, in the former case, may not be possible depending on the subclass implementation. It is worth noting that this method of querying the value from metadata is safe because constant properties are immutable and thus must be established as the class is loaded. Below is the small utility class I have implemented to get predictable constant property access into classes that benefit from it. Lastly, it is worth noting that I've not torture tested the rerouting of the indexing we aren't intercepting; in my limited play it's behaved as expected, but it may be worth looking over if you end up playing around with this and notice abnormal property assignment or reading from non-constant properties.
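The metadata lookup on its own can be sketched without any of the indexing interception. The class Widget, its Label property, and the static method constantValue below are all hypothetical names for illustration. Only the documented meta.property fields Name, Constant, and DefaultValue are used, so this shows the idea rather than the mixin itself.

```matlab
% Widget.m (hypothetical class, for illustration only)
classdef Widget
    properties (Constant)
        Label = "widget"
    end
    methods (Static)
        function value = constantValue(name)
            % Read a Constant property's value from class metadata,
            % so no instance (empty or otherwise) is involved.
            mc    = ?Widget;
            props = mc.PropertyList;
            names = string({props.Name});
            match = props(names == name & [props.Constant]);
            value = match.DefaultValue;
        end
    end
end
```

Calling Widget.constantValue("Label") returns the stored value whether or not any Widget object exists, which is the property of the metadata route that the mixin relies on.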

Sample Class Implementation

classdef(Abstract, HandleCompatible) ConstantProperty < matlab.mixin.internal.indexing.RedefinesDotProperties

%ConstantProperty Returns instance indexed constant properties as though they were statically indexed.

% This class overloads property access to check if the indexed property is constant and return it properly.

%% Property membership utility methods

methods(Access=private)

function [isConst, isProp] = isConstantProp(obj, prop, options)

%isConstantProp Determine if the input property names are constant properties of the input

arguments

obj mixin.ConstantProperty;

prop string;

options.Flatten (1, 1) logical = false;

end

% Store the cache to avoid rechecking string membership and parsing metadata

persistent class_cache

% Initialize cache for all subclasses to maintain their own caches

if(isempty(class_cache))

class_cache = configureDictionary("string", "dictionary");

end

% Gather the current class being analyzed

classname = string(class(obj));

% Check if the current class has a cache, if not make one

if(~isKey(class_cache, classname))

class_cache(classname) = configureDictionary("string", "struct");

end

% Alias the current classes cache

prop_cache = class_cache(classname);

% Check which inputs are already cached

isCached = isKey(prop_cache, prop);

% Add any values that have yet to be cached to the cache

if(any(~isCached, "all"))

% Flatten cache additions

props = row(prop(~isCached));

% Gather the meta-property data of the input object and determine if inputs are listed properties

mc_props = metaclass(obj).PropertyList;

% Determine which properties are keys

[isConst, idx] = ismember(props, string({mc_props.Name}));

idx = idx(isConst);

% Check which of the inputs are constant properties

isConst = repmat(isConst, 2, 1);

isConst(1, isConst(1, :)) = [mc_props(idx).Constant];

% Parse the results into structs for caching

cache_values = cell2struct(num2cell(isConst), ["isConst"; "isProp"]);

prop_cache(props) = row(cache_values);

% Re-sync the cache

class_cache(classname) = prop_cache;

end

% Extract results from the cache

values = prop_cache(prop);

if(options.Flatten)

% Split and reshape output data

sz = size(prop);

isConst = reshape(values.isConst, sz);

isProp = reshape(values.isProp, sz);

else

isConst = struct2cell(values);

end

end

function [isConst, isProp] = isConstantIdxOp(obj, idxOp)

%isConstantIdxOp Determines if the idxOp is referencing a constant property.

arguments

obj mixin.ConstantProperty;

idxOp (1, :) matlab.indexing.IndexingOperation;

end

import matlab.indexing.IndexingOperationType;

if(idxOp(1).Type == IndexingOperationType.Dot)

[isConst, isProp] = isConstantProp(obj, idxOp(1).Name);

else

[isConst, isProp] = deal(false);

end

end

function A = getConstantProperty(obj, idxOp)

%getConstantProperty Returns the value of a constant property using a static reference pattern.

arguments

obj mixin.ConstantProperty;

idxOp (1, :) matlab.indexing.IndexingOperation;

end

A = findobj(metaclass(obj).PropertyList, "Name", idxOp(1).Name).DefaultValue;

end

end

%% Dot indexing methods

methods(Access = protected)

function A = dotReference(obj, idxOp)

arguments(Input)

obj mixin.ConstantProperty;

idxOp (1, :) matlab.indexing.IndexingOperation;

end

arguments(Output, Repeating)

A

end

% Force at least one output

N = max(1, nargout);

% Check if the indexing operation is a property, and if that property is constant

[isConst, isProp] = isConstantIdxOp(obj, idxOp);

if(~isProp)

% Error on invalid properties

throw(MException( ...

"JB:mixin:ConstantProperty:UnrecognizedProperty", ...

"Unrecognized property '%s'.", ...

idxOp(1).Name ...

));

elseif(isConst)

% Handle forwarding indexing operations

if(isscalar(idxOp))

% Direct assignment

[A{1:N}] = getConstantProperty(obj, idxOp);

else

% First extract constant property then forward indexing operations

tmp = getConstantProperty(obj, idxOp);

[A{1:N}] = tmp.(idxOp(2:end));

end

else

% Handle forwarding indexing operations

if(isscalar(idxOp))

% Unfortunately we can't just recall obj.(idxOp) to use default/built-in so we manually extract

[A{1:N}] = obj.(idxOp.Name);

else

% Otherwise let built-in handling proceed

tmp = obj.(idxOp(1).Name);

[A{1:N}] = tmp.(idxOp(2:end));

end

end

end

function obj = dotAssign(obj, idxOp, values)

arguments(Input)

obj mixin.ConstantProperty;

idxOp (1, :) matlab.indexing.IndexingOperation;

end

arguments(Input, Repeating)

values

end

% Handle assignment based on presence of forward indexing

if(isscalar(idxOp))

% Simple broadcasted assignment

[obj.(idxOp.Name)] = deal(values{:});

else

% Initialize the intermediate values and expand the values for assignment

tmp = {obj.(idxOp(1).Name)};

[tmp.(idxOp(2:end))] = deal(values{:});

% Reassign the modified data to the output object

[obj.(idxOp(1).Name)] = deal(tmp{:});

end

end

function n = dotListLength(obj, idxOp, idxCnt)

arguments(Input)

obj mixin.ConstantProperty;

idxOp (1, :) matlab.indexing.IndexingOperation;

idxCnt (1, :) matlab.indexing.IndexingContext;

end

if(isConstantIdxOp(obj, idxOp))

if(isscalar(idxOp))

% Constant properties will also be 1

n = 1;

else

% Checking forwarded indexing operations on the scalar constant property

n = listLength(obj.(idxOp(1).Name), idxOp(2:end), idxCnt);

end

else

% Check the indexing operation normally

% n = listLength(obj, idxOp, idxCnt);

n = numel(obj);

end

end

end

end

k-Wave is a MATLAB community toolbox with a track record that includes over 2,500 citations on Google Scholar and over 7,500 downloads on File Exchange. It is built for the "time-domain simulation of acoustic wave fields" and was recently highlighted as a Pick of the Week.

In December, release v1.4.1 was published on GitHub, including two new features led by the project's core contributors with domain experts in this field. This first release in several years also included quality and maintainability enhancements supported by a new code contributor, GitHub user stellaprins, who is a Research Software Engineer at University College London. Her contributions in 2025 spanned several software engineering aspects, including the addition of continuous integration (CI), fixing several bugs, and updating date/time handling to use datetime. The MATLAB Community Toolbox Program sponsored these contributions and is pleased to see them now integrated into a release for k-Wave users.

I'd like to share some work from Controls Educator and long-term collaborator @Dr James E. Pickering from Harper Adams University. He is currently developing a teaching architecture for control engineering (ACE-CORE) and is looking for feedback from the engineering community.

ACE-CORE is delivered through ACE-Box, a modular hardware platform (Base + Sense, Actuate). More on the hardware here: What is the ACE-Box?

The Structure

(1) Comprehend

Learners build conceptual understanding of control systems by mapping block diagrams directly to physical components and signals. The emphasis is on:

- Feedback architecture

- Sensing and actuation

- Closed-loop behaviour in practical terms

(2) Operate

Using ACE-Box (initially Base + Sense), learners run real closed-loop systems. The learners measure, actuate, and observe real phenomena such as: Noise, Delay, Saturation

Engineering requirements (settling time, overshoot, steady-state error, etc.) are introduced explicitly at this stage.

After completing core activities (e.g., low-pass filter implementation or PID tuning), the pathway branches (see the attached diagram)

(3a) Refine (Option 1)

Students improve performance through structured tuning:

- PID gains

- Filter coefficients

- Performance trade-offs

The focus is optimisation against defined engineering requirements.

(3b) Refine → Engineer (Option 2)

Modelling and analytical design become more explicit at this stage, including:

- Mathematical modelling

- Transfer functions

- System identification

- Stability analysis

- Analytical controller design

Why the Branching?

The structure reflects two realities:

- Engineers who operate and refine existing control systems

- Engineers who design control systems through mathematical modelling

Your perspective would be very valuable:

- Does this progression reflect industry reality?

- Is the branching structure meaningful?

- What blind spots do you see?

Constructive critique is very welcome. Thank you!

I was reading Yann Debray's recent post on automating documentation with agentic AI and ended up spending more time than expected in the comments section. Not because of the comments themselves, but because of something small I noticed while trying to write one. There is no writing assistance of any kind before you post. You type, you submit, and whatever you wrote is live.

For a lot of people that is fine. But MATLAB Central has users from all over the world, and I have seen questions on MATLAB Answers where the technical reasoning is clearly correct but the phrasing makes it hard to follow. The person knew exactly what they meant. The platform just did not help them say it clearly.

I want to share a few ideas around this. They are not fully formed proposals but I think the direction is worth discussing, especially given how much AI tooling MathWorks has built recently.

What the platform has today

When you write a post in Discussions or an answer in MATLAB Answers, the editor gives you basic formatting options. Code blocks, some text styling, that is mostly it. The AI Chat Playground exists as a separate tool, and MATLAB Copilot landed in R2025a for the desktop. But none of that is inside the editor where people actually write community content.

Four things are missing that I think would make a real difference.

Grammar and clarity checking before you post

Not a forced rewrite. Just an optional Check My Draft button that highlights unclear sentences or anything that might trip a reader up. The user reviews it, decides what to change, then posts.

What makes this different from plugging in Grammarly is that a general-purpose tool does not know that readtable is a MATLAB function. It does not know that NaN, inf, or linspace are not errors. A MATLAB-aware checker could flag things that generic tools miss, like someone writing readTable instead of readtable in a solution post.

The llms-with-matlab package already exists on GitHub. Something like this could be built on top of it with a prompt that includes MATLAB function vocabulary as context. That is not a large lift given what is already there.

Translation support

MATLAB Central already has a Japanese-language Discussions channel. That tells you something about the community. The platform is global but most of the technical content is in English, and there is a real gap there.

Two options that would help without being intrusive:

- Write in your language, click Translate, review the English version, then post. The user is still responsible for what goes live.

- A per-post Translate button so readers can view content in a language they are more comfortable with, without changing what is stored on the platform.

A student who has the right answer to a MATLAB Answers question might not post it because they are not confident writing in English. Translation support changes that. The community gets the answer and the contributor gets the credit.

In-editor code suggestions

When someone writes a solution post they usually write the code somewhere else, test it, copy it, paste it, and format it manually. An in-editor assistant that generates a starting scaffold from a plain-text description would cut that loop down.

The key word is scaffold, not a finished answer. The label should say something like AI-generated draft, verify before posting so it is clear the person writing is still accountable. MATLAB Copilot already does something close to this inside the desktop editor. Bringing a lighter version of it into the community editor feels like a natural extension of what already exists.

A note on feasibility

These ideas are not asking for something from scratch. MathWorks already has llms-with-matlab, the MCP Core Server, and MATLAB Copilot as infrastructure. Grammar checking and translation are well-solved problems at the API level. The MATLAB-specific vocabulary awareness is the part worth investing in. None of it should be on by default. All of it should be opt-in and clearly labeled when it runs.

One more thing: diagrams in posts

Right now the only way to include a diagram in a post is to make it externally and upload an image. A lightweight drag-and-drop diagram tool inside the editor would let people show a process or structure quickly without leaving the platform. Nothing complex, just boxes and arrows. For technical explanations it is often faster to draw than to write three paragraphs.

What I am curious about

I am a Data Science student at CU Boulder and an active MATLAB user. These ideas came up while using the platform, not from a product roadmap. I do not know what is already being discussed internally at MathWorks, so it is entirely possible some of this is in progress.

Has anyone else run into the same friction points when writing on MATLAB Central? And for anyone at MathWorks who works on the community platform, is the editor something that gets investment alongside the product tools?

Happy to hear where I am wrong on the feasibility side too.

AI assisted with grammar and framing. All ideas and editorial decisions are my own.

I wanted to share something I've been thinking about to get your reactions. We all know that most MATLAB users are engineers and scientists, using MATLAB to do engineering and science. Of course, some users are professional software developers who build professional software with MATLAB - either MATLAB-based tools for engineers and scientists, or production software with MATLAB Coder, MATLAB Compiler, or MATLAB Web App Server.

I've spent years puzzling about the very large grey area in between - engineers and scientists who build useful-enough stuff in MATLAB that they want their code to work tomorrow, on somebody else's machine, or maybe for a large number of users. My colleagues and I have taken to calling them "Reluctant Developers". I say "them", but I am 1,000% a reluctant developer.

I first hit this problem while working on my Mech Eng Ph.D. in the late 90s. I built some elaborate MATLAB-based tools to run experiments and analysis in our lab. Several of us relied on them day in and day out. I don't think I was out in the real world for more than a month before my advisor pinged me because my software stopped working. And so began a career of building amazing, useful, and wildly unreliable tools for other MATLAB users.

About a decade ago I noticed that people kept trying to nudge me along - "you should really write tests", "why aren't you using source control". I ignored them. These are things software developers do, and I'm an engineer.

I think it finally clicked for me when I listened to a talk at a MATLAB Expo around 2017. An aerospace engineer gave a talk on how his team had adopted git-based workflows for developing flight control algorithms. An attendee asked "how do you have time to do engineering with all this extra time spent using software development tools like git"? The response was something to the effect of "oh, we actually have more time to do engineering. We've eliminated all of the waste from our unmanaged processes, like multiple people making similar updates or losing track of the best version of an algorithm." I still didn't adopt better practices, but at least I started to get a sense of why I might.

Fast-forward to today. I know lots of users who've picked up software dev tools like they are no big deal, but I know lots more who are still holding onto their ad-hoc workflows as long as they can. I'm on a bit of a campaign to try to change this. I'd like to help MATLAB users recognize when they have problems that are best solved by borrowing tools from our software developer friends, and then give a gentle onramp to using these tools with MATLAB.

I recently published this guide as a start:

Waddya think? Does the idea of the Reluctant Developer resonate with you? If you take some time to read the guide, I'd love to hear your comments here, or you can give suggestions by creating Issues on the guide on GitHub (there I go, sneaking in some software dev stuff ...)

DocMaker allows you to create MATLAB toolbox documentation from Markdown documents and MATLAB scripts.

The MathWorks Consulting group have been using it for a while now, and so David Sampson, the director of Application Engineering, felt that it was time to share it with the MATLAB and Simulink community.

David listed its features as:

➡️ write documentation in Markdown not HTML

🏃 run MATLAB code and insert textual and graphical output

📜 no more hand writing XML index files

🕸️ generate documentation for any release from R2021a onwards

💻 view and edit documentation in MATLAB, VS Code, GitHub, GitLab, ...

🎉 automate toolbox documentation generation using MATLAB build tool

📃 fully documented using itself

😎 supports light, dark, and responsive modes

🐣 cute logo

I got an email message that says all the files I've uploaded to the File Exchange will be given unique names. Are these new names being applied to my files automatically? If so, do I need to download them to get versions with the new name so that if I update them they'll have the new name instead of the name I'm using now?

Currently, the open-source MATLAB Community is accessed via the desktop web interface, and the experience on mobile devices is not very good—especially switching between sections like Discussion, FEX, Answers, and Cody is awkward. Having a dedicated app would make using the community much more convenient on phones.

Similarly, GitHub has a mobile app, and it's convenient for me.

https://www.mathworks.com/matlabcentral/answers/2182045-why-can-t-i-renew-or-purchase-add-ons-for-m…

"As of January 1, 2026, Perpetual Student and Home offerings have been sunset and replaced with new Annual Subscription Student and Home offerings."

So, Perpetual licenses for Student and Home versions are no more. Also, the ability for Student and Home to license just MATLAB by itself has been removed.

The new offering for Students is $US119 per year with no possibility of renewing through a Software Maintenance Service type offering. That $US119 covers the Student Suite of MATLAB and Simulink and 11 other toolboxes. Before, the perpetual license was $US99... and was a perpetual license, so if (for example) you bought it in second year you could use it in third and fourth year for no additional cost. $US99 once, or $US99 + $US35*2 = $US169 (if you took SMS for 2 years) has now been replaced by $US119 * 3 = $US357 (assuming 3 years use.)

The new offering for Home is $US165 per year for the Suite (MATLAB + 12 common toolboxes). This is less expensive than the previous $US150 + $US49 per toolbox, if you had a use for those toolboxes. Except the previous price was for a perpetual license. It seems to me more likely that Home users would have a use for the license for extended periods, compared to the Student license (Student licenses were perpetual licenses but were only valid while you were enrolled in a degree-granting institution).

Unfortunately, I do not presently recall the (former) price for SMS for the Home license. It might be the case that by the time you added up SMS for base MATLAB and the 12 toolboxes, that you were pretty much approaching $US165 per year anyhow... if you needed those toolboxes and were willing to pay for SMS.

But any way you look at it, the price for the Student version has effectively gone way up. I think this is a bad move, that will discourage students from purchasing MATLAB in any given year, unless they need it for courses. No (well, not much) more students buying MATLAB with the intent to explore it, knowing that it would still be available to them when it came time for their courses.

I struggle with animations. I often want a simple scrollable animation and wind up having to export to some external viewer in some supported format. The new Live Script automation of animations fails and sabotages other methods and it is not well documented so even AIs are clueless how to resolve issues. Often an animation works natively but not with MATLAB Online. Animation of results seems to me rather basic and should be easier!

Frequently, I find myself doing things like the following,

xyz=rand(100,3);

XYZ=num2cell(xyz,1);

scatter3(XYZ{:,1:3})

But num2cell is time-consuming, not to mention that requiring it means extra lines of code. Is there any reason not to enable this syntax,

scatter3(xyz{:,1:3})

so that one doesn't have to go through num2cell? Here, I adopt the rule that only dimensions that are not ':' will be comma-expanded.
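Until something like the proposed syntax exists, one way to skip the intermediate cell array is to index the columns directly. This is only a sketch of a workaround, not the requested feature; the `col` shorthand is a hypothetical name introduced here for illustration.

```matlab
xyz = rand(100,3);

% Hypothetical shorthand: col(k) returns the k-th column of xyz.
col = @(k) xyz(:,k);

% Pass each column as its own argument, with no num2cell step.
h = scatter3(col(1), col(2), col(3));
```

This stays verbose for matrices with many columns, which is the case where the proposed `xyz{:,1:3}` expansion would help most.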

(Requested for newer MATLAB releases (e.g. R2026b), Parallel Computing Toolbox.)

Lower precision array types have been gaining popularity over the years for deep learning. The lowest-precision built-in array types that MATLAB currently offers are 8-bit, e.g. int8 and uint8. A good thing is that these 8-bit array types do have gpuArray support, meaning that one is able to design GPU MEX codes that take in these 8-bit arrays and reinterpret them bit-wise as other 8-bit array types, e.g. FP8, which is an especially common array type in modern deep learning applications. I myself have used this to develop forward pass operations with 8-bit precision that are around twice as fast as 16-bit operations and with output arrays that still agree well with 16-bit outputs (measured by high cosine similarity). So the 8-bit support that MATLAB offers is already quite sufficient.
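As a small CPU-side illustration of what "reinterpret bit-wise" means, here is a sketch using the built-in typecast function. It only reinterprets int8 as uint8; decoding the bits as FP8 would happen inside the GPU MEX code and is not shown.

```matlab
a = int8([-1 0 127 -128]);

% Same bytes, viewed as unsigned 8-bit integers; no numeric conversion occurs.
b = typecast(a, 'uint8');    % b is [255 0 127 128]

% The round trip restores the original values exactly.
a2 = typecast(b, 'int8');
```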

Recently, 4-bit precision array types have also been shown to be very useful in deep learning. These array types can be processed with the Tensor Cores of more modern GPUs, such as those of NVIDIA's Blackwell architecture. However, MATLAB does not yet have a built-in 4-bit precision array type.

Just as MATLAB has int8 and uint8, both with gpuArray support, it would be nice for MATLAB to have int4 and uint4, also with gpuArray support.
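In the meantime, a common stopgap is to pack two 4-bit values into each uint8 element. The sketch below is one possible layout (low nibble first), chosen here as an assumption; it is not a MATLAB-defined format, and the variable names are illustrative.

```matlab
vals = uint8([3 15 0 9]);            % unsigned 4-bit values, each in 0..15

lo = vals(1:2:end);                  % goes into the low nibble
hi = vals(2:2:end);                  % goes into the high nibble
packed = bitor(bitshift(hi, 4), lo); % two values per byte: [243 144]

% Unpack: mask out the low nibble, shift down the high nibble.
lo2 = bitand(packed, uint8(15));
hi2 = bitshift(packed, -4);
unpacked = reshape([lo2; hi2], 1, []);   % back to [3 15 0 9]
```

A native uint4 type would remove the need for this bookkeeping and make the element count match the logical array size.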

Our exportgraphics and copygraphics functions now offer direct and intuitive control over the size, padding, and aspect ratio of your exported figures.

- Specify Output Size: Use the new Width, Height, and Units name-value pairs.

- Control Padding: Easily adjust the space around your axes using the Padding argument, or set it to match the onscreen appearance.

- Preserve Aspect Ratio: Use PreserveAspectRatio='on' to maintain the original plot proportions when specifying a fixed size.

- SVG Export: The exportgraphics function now supports exporting to the SVG file format.

Check out the full article on the Graphics and App Building blog for examples and details: Advanced Control of Size and Layout of Exported Graphics
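A minimal sketch of how these options might be combined, assuming a release that includes them. The argument names come from the list above; the "inches" value for Units and the file names are assumptions, so check the exportgraphics documentation for the accepted values.

```matlab
fig = figure;
plot(1:10, (1:10).^2)

% Fixed output size; the "inches" value for Units is an assumption.
exportgraphics(fig, "sized_plot.png", Width=4, Height=3, Units="inches")

% Same fixed size, but keep the original plot proportions.
exportgraphics(fig, "sized_plot_aspect.png", Width=4, Height=3, ...
    Units="inches", PreserveAspectRatio='on')

% SVG output, selected by the file extension.
exportgraphics(fig, "plot.svg")
```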

- No, staying home (or where I'm now): 25%

- Yes, 1 night: 0%

- Yes, 2 nights: 12.5%

- Yes, 3 nights: 12.5%

- Yes, 4-7 nights: 25%

- Yes, 8 nights or more: 25%

8 votes