Overview of Differential Equations | Differential Equations and Linear Algebra

From the series: Differential Equations and Linear Algebra

Gilbert Strang, Massachusetts Institute of Technology (MIT)

Published: 27 Jan 2016

OK. Well, the idea of this first video is to tell you what's coming, to give a kind of outline of what is reasonable to learn about ordinary differential equations. And a big part of the series will be videos on first order equations and videos on second order equations. Those are the ones you see most in applications. And those are the ones you can understand and solve, when you're fortunate.

So first order equations means first derivatives come into the equation. Here's a nice one that we will solve, and we'll spend a lot of time on it. The derivative dy/dt is the rate of change of y. The changes in the unknown y, as time goes forward, depend partly on the solution itself. That's the idea of a differential equation: it connects the changes in y with the function y as it is.

And then you have inputs called q of t, which produce their own change. They go into the system. They become part of y. And they grow, decay, oscillate, whatever y of t does. So that is a linear equation with a right-hand side, with an input, a forcing term.
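As a small sketch, assume the equation on the board has the form dy/dt = a y + q(t), with a constant rate a (the letter a is our label) and, to keep it simple, a constant input q. Then the solution is y(t) = e^(at) y(0) + (q/a)(e^(at) - 1). The code checks that formula against the differential equation itself.

```python
import math

def solve_constant_input(a, q, y0, t):
    """Exact solution of dy/dt = a*y + q for constant a != 0 and constant input q."""
    growth = math.exp(a * t)
    return growth * y0 + (q / a) * (growth - 1.0)

def residual(a, q, y0, t, h=1e-5):
    """dy/dt - (a*y + q), with dy/dt estimated by a central difference; should be near 0."""
    dydt = (solve_constant_input(a, q, y0, t + h) - solve_constant_input(a, q, y0, t - h)) / (2 * h)
    return dydt - (a * solve_constant_input(a, q, y0, t) + q)

# Decay (a < 0) with a steady input settles toward the steady state y = -q/a = 4.
print(solve_constant_input(-0.5, 2.0, 0.0, 20.0))  # close to 4.0
print(residual(-0.5, 2.0, 0.0, 1.0))               # close to 0
```

The input q enters, becomes part of y, and then decays or grows at the same rate a as everything else in y.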

And here is a nonlinear equation: the derivative of y, the slope, depends on y. So it's a differential equation. But f of y could be y squared or y cubed or the sine of y or the exponential of y. So it could be nonlinear. Linear means that we see y by itself, just to the first power. Here we won't. Still, we'll come pretty close to getting a solution, because it's a first order equation. In the most general first order equation, the function f would depend on both t and y, so the input would change with time. Here, the input depends only on the current value of y.
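To make "nonlinear" concrete, take f(y) = y squared, one of the examples just mentioned. Separating variables (dy/y^2 = dt) gives y(t) = y0/(1 - y0 t), which blows up at t = 1/y0, something a linear equation never does. This sketch (our own illustration) compares that formula with a crude fixed-step march.

```python
def exact(y0, t):
    """Solution of dy/dt = y**2 with y(0) = y0, valid while y0*t < 1."""
    return y0 / (1.0 - y0 * t)

def march(f, y0, t_end, steps):
    """Crude fixed-step march: repeatedly y <- y + dt*f(y) until t_end."""
    dt = t_end / steps
    y = y0
    for _ in range(steps):
        y = y + dt * f(y)
    return y

y0 = 1.0
print(exact(y0, 0.5))                             # 2.0
print(march(lambda y: y * y, y0, 0.5, 100_000))  # close to 2.0
# As t approaches 1/y0 = 1, the solution grows without bound.
print(exact(y0, 0.999))                           # about 1000
```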

I might think of y as money in a bank, growing, decaying, oscillating. Or I might think of y as the distance on a spring. Lots of applications coming.

OK. So those are first order equations. And second order have second derivatives. The second derivative is the acceleration. It tells you about the bending of the curve.

If I have a graph, we know the first derivative gives the slope of the graph. Is it going up? Is it going down? Is it at a maximum?

The second derivative tells you the bending of the graph, how it goes away from a straight line. And that's acceleration. So Newton's law, the physics we all live with, would say acceleration equals some force. And there is a force that depends, again, linearly (that's a keyword) on y. Just y to the first power.

And here is a little bit more general equation. In Newton's law, the acceleration is multiplied by the mass. So this includes a physical constant here, the mass.

Then there could be some damping. If I have motion, there may be friction slowing it down. That depends on the first derivative, the velocity.

And then there could be the same kind of force term that depends on y itself. And there could be some outside force, some person or machine that's creating movement: an external forcing term.

So that's a big equation. And let me just say, at this point: with first order equations we let things be nonlinear, and we still had a pretty good chance. If these second order equations become nonlinear, the chance drops. And the further we go, the more we need linearity and maybe even constant coefficients: m, b, and k. So the problem that we can really solve, as we get good at it, is a linear equation, second order let's say, with constant coefficients. That's pretty much the limit of what we can hope to solve explicitly and really understand. So: linear with constant coefficients. Say it again. Those are the good equations.
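For the constant-coefficient equation m y'' + b y' + k y = f(t), assembled from the mass, damping, spring, and outside-force terms just described, the standard first step with no outside force (f = 0) is to try y = e^(st). Since y' = s y and y'' = s^2 y, the differential equation becomes the quadratic m s^2 + b s + k = 0. This sketch finds the two exponents s and checks that e^(st) really solves the equation; the example values of m, b, k are ours.

```python
import cmath

def exponents(m, b, k):
    """Roots s of m*s**2 + b*s + k = 0; each gives a solution y = e^(s t) of m y'' + b y' + k y = 0."""
    disc = cmath.sqrt(b * b - 4 * m * k)
    return (-b + disc) / (2 * m), (-b - disc) / (2 * m)

def residual(m, b, k, s, t):
    """m y'' + b y' + k y for y = e^(s t), i.e. (m s^2 + b s + k) e^(s t); zero when s is a root."""
    y = cmath.exp(s * t)
    return m * s * s * y + b * s * y + k * y

m, b, k = 1.0, 0.4, 4.0        # light damping
s1, s2 = exponents(m, b, k)
print(s1, s2)                  # complex pair with real part -0.2: a decaying oscillation
print(abs(residual(m, b, k, s1, 2.0)))  # essentially 0
```

Light damping gives complex exponents (oscillation that dies out); heavy damping, such as m, b, k = 1, 3, 2, gives two real negative exponents, -1 and -2.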

And I think of solutions in two ways. First, I might have a really nice function f, like an exponential. Exponentials are the great functions of differential equations, the great functions in this series. You'll see them over and over. Say f of t equals e to the t. Or e to the omega t. Or e to the i omega t, where i is the square root of minus 1.

In those cases, we will get a similarly nice function for the solution. Those are the best: the input is a function we know, like an exponential, and we get solutions that we know.
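Here is why an exponential input is so pleasant, worked for a first order equation dy/dt = a y + e^(ct), where a and c are illustrative constants with c different from a. Substituting the guess y = Y e^(ct) gives cY = aY + 1, so Y = 1/(c - a): the output is the same exponential, just multiplied by a number. Taking c = i omega covers the e^(i omega t) input. A minimal check:

```python
import cmath

def particular_solution(a, c, t):
    """Y * e^(c t) with Y = 1/(c - a); solves dy/dt = a*y + e^(c t) when c != a."""
    return cmath.exp(c * t) / (c - a)

def residual(a, c, t, h=1e-6):
    """dy/dt - (a*y + e^(c t)), with dy/dt from a central difference; should be near 0."""
    dydt = (particular_solution(a, c, t + h) - particular_solution(a, c, t - h)) / (2 * h)
    return dydt - (a * particular_solution(a, c, t) + cmath.exp(c * t))

a, omega = -1.0, 3.0
print(particular_solution(a, 1j * omega, 0.0))  # 1/(1 + 3i) = 0.1 - 0.3i, up to rounding
print(abs(residual(a, 1j * omega, 0.7)))        # essentially 0
```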

Second best is when f is some function we don't especially know. In that case, the solution probably involves an integral of f, or two integrals of f. We have a formula for it, but that formula includes an integration that we would have to do, either by looking it up or numerically.
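For the first order equation dy/dt = a y + q(t) (a again our label for a constant rate), the standard formula is y(t) = e^(at) y(0) + integral from 0 to t of e^(a(t-s)) q(s) ds. That integral is the part we may have to do numerically. This sketch uses the trapezoidal rule, one simple choice among many, and checks it on q(s) = s, where the integral works out to (e^(at) - 1 - at)/a^2.

```python
import math

def solution(a, q, y0, t, n=2000):
    """y(t) = e^(a t) y0 + integral_0^t e^(a (t - s)) q(s) ds, integral by the trapezoidal rule with n panels."""
    h = t / n
    values = [math.exp(a * (t - i * h)) * q(i * h) for i in range(n + 1)]
    integral = h * (sum(values) - 0.5 * (values[0] + values[-1]))
    return math.exp(a * t) * y0 + integral

a, t = -0.8, 3.0
approx = solution(a, lambda s: s, 0.0, t)
exact = (math.exp(a * t) - 1 - a * t) / a**2
print(approx, exact)  # agree to several digits
```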

And when we get to completely nonlinear functions, or varying coefficients, then we're going to go numerical. So really, the wide, wide part of the subject ends up as numerical solutions. But you've got a whole bunch of videos coming that have nice functions and nice solutions.

OK. So that's first order and second order. Now there's more, because a system doesn't usually consist of just a single resistor or a single spring. In reality, we have many equations. And we need to deal with those.

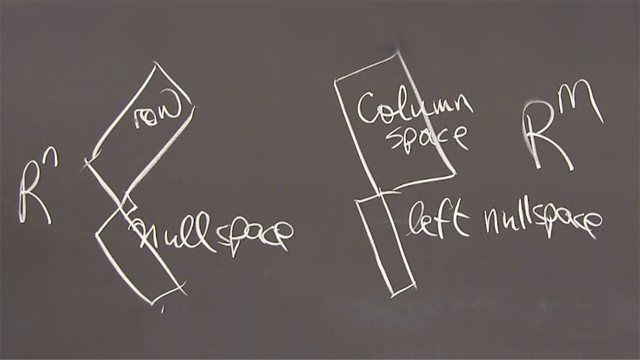

So y is now a vector: y1, y2, up to yn. n different unknowns, n different equations. So here the matrix is n by n. It's first order, with constant coefficients, so we'll be able to get somewhere. But it's a system of n coupled equations.

And so is this one with a second derivative: the second derivative of the solution, again y1 to yn. And we have a matrix, usually a symmetric matrix there, we hope, multiplying y.

So again: linear, constant coefficients, but several equations at once. And that will bring in the idea of eigenvalues and eigenvectors. Eigenvalues and eigenvectors are a key bit of linear algebra that makes these problems simple, because they turn this coupled problem into n uncoupled problems: n first order equations, or n second order equations, that we can solve separately. That's the goal with matrices: to uncouple the equations.
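A sketch of the uncoupling, assuming the first order system has the form dy/dt = A y with a constant n by n matrix A that has a full set of eigenvectors (the name A and the example matrix are ours). Writing A = V Lambda V^(-1) and z = V^(-1) y turns the system into n separate equations dz_i/dt = lambda_i z_i, each solved by e^(lambda_i t). Then y(t) = V e^(Lambda t) V^(-1) y(0).

```python
import numpy as np

def solve_by_eigenvectors(A, y0, t):
    """Solve dy/dt = A y by uncoupling: y(t) = V exp(Lambda t) V^{-1} y0 (A assumed diagonalizable)."""
    lam, V = np.linalg.eig(A)      # columns of V are eigenvectors, lam holds the eigenvalues
    z0 = np.linalg.solve(V, y0)    # coordinates of y0 in the eigenvector basis
    z_t = np.exp(lam * t) * z0     # each uncoupled equation dz_i/dt = lam_i z_i, solved on its own
    return (V @ z_t).real          # back to y; imaginary parts cancel for real A and real y0

A = np.array([[-2.0, 1.0],
              [1.0, -2.0]])        # symmetric, eigenvalues -1 and -3
y0 = np.array([1.0, 0.0])
print(solve_by_eigenvectors(A, y0, 1.0))
# For this A: y1(t) = (e^-t + e^-3t)/2 and y2(t) = (e^-t - e^-3t)/2.
```

The eigenvector (1, 1) decays like e^(-t) and the eigenvector (1, -1) decays like e^(-3t); the solution is just a combination of those two pure exponentials.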

OK. And then really the big reality of this subject is that solutions are found numerically and very efficiently. And there's a lot to learn about that, a lot to learn. And MATLAB is a first-class package that gives you numerical solutions with many options.

One of the options may be the favorite: ode45. ODE for ordinary differential equations, and then the numbers 4, 5. Cleve Moler, who wrote MATLAB, is going to create a series of parallel videos explaining the steps toward numerical solution.

Those steps begin with a very simple method. Maybe I'll put the creator's name down: Euler. Euler lived centuries ago, so you know he didn't have a computer. But he had a simple way of approximating. So Euler might be ode1. Now we've left Euler behind. Euler is fine, but not sufficiently accurate.
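Euler's idea is to step forward using the slope at the current point: y_new = y_old + dt * f(t, y_old). The sketch below is our own illustration, not the code of MATLAB's ode1. It applies Euler to dy/dt = -y, whose exact solution is e^(-t), and shows the error at t = 1 roughly halving each time the step is halved. That is what "first order accurate" means, and it is why Euler is not sufficiently accurate.

```python
import math

def euler(f, y0, t_end, steps):
    """Euler's method: y_{n+1} = y_n + dt * f(t_n, y_n), using the slope at the start of each step."""
    dt = t_end / steps
    t, y = 0.0, y0
    for _ in range(steps):
        y = y + dt * f(t, y)
        t = t + dt
    return y

f = lambda t, y: -y            # dy/dt = -y, exact solution e^(-t)
exact = math.exp(-1.0)
for steps in (10, 20, 40):
    err = abs(euler(f, 1.0, 1.0, steps) - exact)
    print(steps, err)          # error roughly halves each time the number of steps doubles
```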

In ode45, the 4 and 5 indicate much higher accuracy, and there's much more flexibility in that package. So starting with Euler, Cleve Moler will explain several steps that reach a real workhorse package.
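MATLAB's ode45 pairs a fourth-order and a fifth-order Runge-Kutta formula (the Dormand-Prince pair) and adjusts its step size automatically. As a simpler stand-in, this sketch uses the classical fixed-step fourth-order Runge-Kutta method on the same test problem dy/dt = -y. It is not ode45, but it shows what "order 4" buys: halving the step cuts the error by about 2^4 = 16 instead of 2.

```python
import math

def rk4(f, y0, t_end, steps):
    """Classical fourth-order Runge-Kutta with a fixed step (a stand-in, not MATLAB's adaptive ode45)."""
    dt = t_end / steps
    t, y = 0.0, y0
    for _ in range(steps):
        k1 = f(t, y)
        k2 = f(t + dt / 2, y + dt / 2 * k1)
        k3 = f(t + dt / 2, y + dt / 2 * k2)
        k4 = f(t + dt, y + dt * k3)
        y = y + dt / 6 * (k1 + 2 * k2 + 2 * k3 + k4)  # weighted average of four slopes
        t = t + dt
    return y

f = lambda t, y: -y
exact = math.exp(-1.0)
for steps in (10, 20, 40):
    print(steps, abs(rk4(f, 1.0, 1.0, steps) - exact))  # error drops about 16x per halving
```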

So that's a parallel series where you'll see the codes. This will be a chalk and blackboard series, where I'll find solutions in exponential form. And if I can, I would like to conclude the series by reaching partial differential equations.

So I'll just write some partial differential equations here, so you know what they mean. And that's a goal which I hope to reach.

So one partial differential equation would be ∂u/∂t (you see partial derivatives) equals the second derivative ∂²u/∂x². So I have two variables now: time t, which I always have, and x in the space direction. That's called the heat equation. It's a very important constant coefficient partial differential equation.

So that's a PDE, as distinct from an ODE. Let me write down one more: the second time derivative of u equals the same right-hand side, the second derivative in the x direction, ∂²u/∂t² = ∂²u/∂x². That would be called the wave equation.
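A sketch of the wave equation u_tt = u_xx, assuming 0 < x < 1 with u = 0 at both ends and starting from u(x, 0) = sin(pi x) at rest (our own test problem). The leapfrog scheme replaces both second derivatives by second differences; the exact answer here is the standing wave sin(pi x) cos(pi t), which the numbers should follow.

```python
import numpy as np

def leapfrog_wave(n, steps, t_end):
    """Leapfrog for u_tt = u_xx on 0 < x < 1, u = 0 at both ends, u(x,0) = sin(pi x), u_t(x,0) = 0."""
    x = np.linspace(0.0, 1.0, n + 1)
    dx, dt = 1.0 / n, t_end / steps
    r2 = (dt / dx) ** 2                         # leapfrog is stable when dt <= dx

    def second_diff(u):
        d = np.zeros_like(u)
        d[1:-1] = u[2:] - 2 * u[1:-1] + u[:-2]  # boundary entries stay 0
        return d

    u_prev = np.sin(np.pi * x)
    u_prev[[0, -1]] = 0.0                       # enforce u = 0 exactly at both ends
    u = u_prev + 0.5 * r2 * second_diff(u_prev) # first step uses u_t(x,0) = 0
    for _ in range(steps - 1):
        u, u_prev = 2 * u - u_prev + r2 * second_diff(u), u
    return x, u

x, u = leapfrog_wave(100, 200, 0.3)
exact = np.sin(np.pi * x) * np.cos(np.pi * 0.3)  # standing wave
print(np.abs(u - exact).max())                   # small
```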

The heat equation is like the first order equation in time, and it's like a big system. In fact, it's like an infinite size system of equations. So: first order in time, or second order in time. Heat equation, wave equation.
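To see the heat equation as a big system, here is a method-of-lines sketch (our own discretization choice). Keep n interior points on 0 < x < 1 with u = 0 at the ends and spacing h = 1/(n+1). Replacing the second x-derivative by second differences turns u_t = u_xx into dU/dt = (K/h^2) U, a first order system with a symmetric matrix. As n grows the system gets bigger, and its slowest eigenvalue approaches -pi^2, the exact decay rate of sin(pi x).

```python
import numpy as np

def heat_as_system(n, t):
    """Method of lines for u_t = u_xx on 0 < x < 1 with u = 0 at both ends.

    n interior points with spacing h = 1/(n+1) turn the PDE into dU/dt = (K/h^2) U,
    where K is the second-difference matrix (-2 on the diagonal, 1 beside it).
    The system is solved through the eigenvectors of the symmetric matrix K/h^2.
    """
    h = 1.0 / (n + 1)
    x = np.linspace(h, 1.0 - h, n)
    K = -2.0 * np.eye(n) + np.eye(n, k=1) + np.eye(n, k=-1)
    lam, Q = np.linalg.eigh(K / h**2)         # real eigenvalues, orthonormal eigenvectors
    U0 = np.sin(np.pi * x)                    # initial temperature profile
    U_t = Q @ (np.exp(lam * t) * (Q.T @ U0))  # each eigen-component decays on its own
    return x, U_t, lam

x, U, lam = heat_as_system(50, 0.1)
print(lam.max(), -np.pi**2)                          # slowest decay rate is close to -pi^2
exact = np.exp(-np.pi**2 * 0.1) * np.sin(np.pi * x)  # exact solution of the heat equation
print(np.abs(U - exact).max())                       # small discretization error
```

This is the same eigenvector uncoupling used for dy/dt = A y, just with a much larger matrix.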

And I would also like to include the Laplace equation, if we get there. So those are goals for the end of the series that go beyond some courses in ODEs. But the main goal here is to give you the standard, clear picture of the basic differential equations that we can solve and understand.

Well, I hope it goes well. Thanks.