Differential Equations and Linear Algebra, 2.6c: Variations of Parameters

From the series: Differential Equations and Linear Algebra

Gilbert Strang, Massachusetts Institute of Technology (MIT)

Combine null solutions y1 and y2 with coefficients c1(t) and c2(t) to find a particular solution for any f(t).

Published: 27 Jan 2016

OK. So today is about a specific way to solve linear differential equations. I'll take second order equations as a good example. This way is called variation of parameters, and it will lead us to a formula for the answer, an integral. So that's the big step: to get from the differential equation to y of t equals a certain integral. That integral will involve the right-hand side, of course, the source. If we can do the integration, then we get a complete answer. But in any case, we get a nice form for the answer. OK.

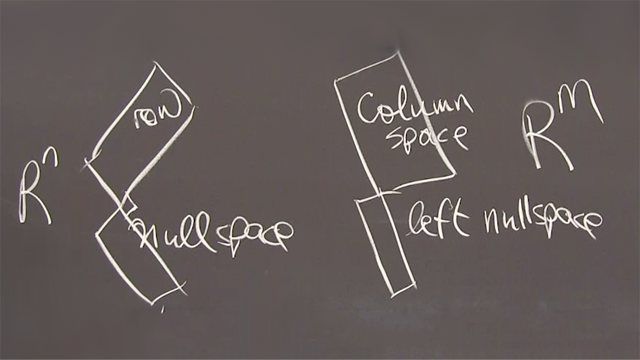

So what's the idea? We're looking for a particular solution; today is about a particular solution. We have to know two null solutions to get started. So we must know y1 and y2, null solutions of y'' + B y' + C y = 0, the equation with f equal to 0.

And of course we do know two null solutions when the coefficients B and C are constants. We'll do that as a good example, the most important example. Sometimes we can also find the null solutions when B and C are changing in time, time varying, and that's all to the good. Those problems are not easy to solve. But it's really for constant coefficients that we know this works.

OK. What's the idea? The idea is to use y1 and y2, the null solutions. If we multiply them by constants, we get another null solution, by linearity. But the idea is to multiply them by functions. Those constants are no longer constant; they are varying parameters.

So the solution will have the form y(t) = c1(t) y1(t) + c2(t) y2(t). And we want to find c1 and c2, depending on t, so that the differential equation is solved.

So of course, I'm going to plug that into the differential equation and find out the conditions on c1 and c2. May I just jump to the result? We know that y1 and y2 satisfy the equation with 0 on the right-hand side. So it's c1 of t and c2 of t that are going to deal with the f.

So here is the result after putting it in. I discover that c1 prime (the derivative of c1 with respect to t) times y1, plus c2 prime times y2, equals 0. That gives me one equation for c1 prime and c2 prime.

The other equation is c1 prime times y1 prime, plus c2 prime times y2 prime. Of course, prime means the derivative. When I plug all this into the differential equation, I see f of t on the right-hand side of that second equation.
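The lecture skips the substitution itself. Here is a sketch of it, assuming the equation is written y'' + B(t) y' + C(t) y = f(t), with the same B and C that appear later in s^2 + Bs + C. The first condition is a choice we are free to impose; it keeps c1'' and c2'' out of y''.

```latex
\begin{aligned}
y &= c_1 y_1 + c_2 y_2 \\
y' &= c_1 y_1' + c_2 y_2' + \underbrace{(c_1' y_1 + c_2' y_2)}_{=\,0\ \text{(imposed)}} \\
y'' &= c_1 y_1'' + c_2 y_2'' + c_1' y_1' + c_2' y_2' \\
y'' + B y' + C y &= c_1\,(y_1'' + B y_1' + C y_1) + c_2\,(y_2'' + B y_2' + C y_2) + c_1' y_1' + c_2' y_2' \\
&= c_1' y_1' + c_2' y_2' \qquad (y_1, y_2 \text{ are null solutions}) \\[6pt]
\begin{bmatrix} y_1 & y_2 \\ y_1' & y_2' \end{bmatrix}
\begin{bmatrix} c_1' \\ c_2' \end{bmatrix}
&= \begin{bmatrix} 0 \\ f(t) \end{bmatrix}
\end{aligned}
```

Setting the last line of the substitution equal to f(t) gives the second equation. The c1 and c2 terms drop out, which is why only c1' and c2' survive.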

So I have two equations at each time. At each time instant I have two ordinary linear equations. They're straight lines in the plane of the unknowns c1 prime and c2 prime, and they intersect. We know how to solve that; it's the most basic problem of linear algebra, two equations in two unknowns. We do that for each t, and we get an answer.
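At a single instant the system is just a 2 by 2 linear solve. Below is a minimal sketch using Cramer's rule. The function name `coefficient_rates` and its argument order are hypothetical, chosen for illustration; the inputs are the numbers y1(t), y2(t), y1'(t), y2'(t), f(t) at one time t.

```python
import math


def coefficient_rates(y1, y2, dy1, dy2, f):
    """Solve, at one instant t, the 2x2 system
           c1' * y1  + c2' * y2  = 0
           c1' * dy1 + c2' * dy2 = f
    for (c1', c2') by Cramer's rule. All inputs are numbers evaluated at t."""
    W = y1 * dy2 - y2 * dy1  # determinant of [[y1, y2], [dy1, dy2]]
    if W == 0:
        raise ValueError("W(t) = 0: the null solutions are not independent here")
    dc1 = -y2 * f / W  # right-hand side (0, f) replaces column 1
    dc2 = y1 * f / W   # right-hand side (0, f) replaces column 2
    return dc1, dc2


# Example (assumed for illustration): y'' + y = f has null solutions
# y1 = cos t, y2 = sin t, whose determinant is cos^2 t + sin^2 t = 1.
t, f_t = 0.7, 2.0
dc1, dc2 = coefficient_rates(math.cos(t), math.sin(t), -math.sin(t), math.cos(t), f_t)
print(dc1, dc2)  # -2 sin(0.7) and 2 cos(0.7), up to rounding
```

Cramer's rule is used here only because it makes the determinant visible; any 2 by 2 solver gives the same c1' and c2'.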

So this leads us to c1 and c2, and they depend on t. Well, actually it leads us to c1 prime and c2 prime, the derivatives. It just happens that when we plug in, the terms with c1 and c2 themselves disappear, because y1 and y2 are null solutions. We know a constant c1 would be fine, since that just gives a null solution; what we get are equations that involve c1 prime and c2 prime at each time.

All right. Two equations, two unknowns: we solve them. OK.

When we solve them, we put c1 and c2 back into y of t and can write the answer. I'm not doing all the gory calculations; I'm just going to write the answer. So y of t is going to be c1 times y1 plus c2 times y2, with c1 and c2 coming from these two equations. OK.

So I have y1 of t times c1. What comes out of the equations is c1 prime, so I have to integrate c1 prime to get c1. The c1 prime that comes out is minus y2 times f, and then there is a denominator, because with two equations in two unknowns there's a little 2 by 2 determinant. I'll just call it W. So the first term is y1 of t times the integral of minus y2 f over W, dt.

It has a famous name in differential equations, and I'll tell you that name. W of t is the determinant of the coefficient matrix, with rows y1, y2 and y1 prime, y2 prime. The two equations have to be independent; the matrix has to be invertible to give me a solution, and it's this determinant that is the critical thing. So W is y1 times y2 prime minus y2 times y1 prime. That's the function.

Remember, we know y1 and y2. The whole deal is starting with y1 and y2, null solutions, and combining them. So that's the first term, with c1, and now I have to add in the c2 term. It looks a little messy, but it's just an integral.

So what is y2 of t multiplied by? It's multiplied by c2, and since the equations give me c2 prime, I have to integrate to get c2. It turns out to be the integral of y1 times f, divided by that same determinant W, dt.

Let me tell you the name of that determinant. It's named after a mathematician named Wronski, so it's called the Wronskian. You can call it that or not. It's y1 y2 prime minus y2 y1 prime.
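Written out, Cramer's rule on that 2 by 2 system gives the two rates, and integrating them gives the formula just described. The lower limit 0 is an assumption at this point (the lecture fixes it only later, in the constant coefficient example); a different lower limit just adds a null solution.

```latex
\begin{aligned}
W(t) &= \det\begin{bmatrix} y_1 & y_2 \\ y_1' & y_2' \end{bmatrix} = y_1 y_2' - y_2 y_1' \\
c_1'(t) &= -\frac{y_2(t)\, f(t)}{W(t)}, \qquad c_2'(t) = \frac{y_1(t)\, f(t)}{W(t)} \\
y_p(t) &= -y_1(t) \int_0^t \frac{y_2(T)\, f(T)}{W(T)}\, dT \;+\; y_2(t) \int_0^t \frac{y_1(T)\, f(T)}{W(T)}\, dT
\end{aligned}
```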

So we know y1 and y2. We plug those in and find the Wronskian. We put that into these integrals: we have the y1s and the y2s, we're given f, and we integrate. Well, if we can integrate. I don't plan to go much beyond that. That's a formula for the answer.
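When the integrals can't be done by hand, they can be done numerically. Below is a minimal sketch, assuming integration from 0 to t and the composite trapezoid rule (a swapped-in numerical choice, not something from the lecture). The name `variation_of_parameters` and its parameters are hypothetical; the caller supplies y1, y2, their derivatives, and f as Python functions.

```python
import math


def variation_of_parameters(y1, y2, dy1, dy2, f, t, n=2000):
    """Approximate the particular solution
        y_p(t) = -y1(t) * int_0^t y2(T) f(T) / W(T) dT
                 + y2(t) * int_0^t y1(T) f(T) / W(T) dT,
    where W = y1 y2' - y2 y1' is the Wronskian, using the trapezoid rule
    with n subintervals on [0, t]."""

    def wronskian(T):
        return y1(T) * dy2(T) - y2(T) * dy1(T)

    h = t / n
    int_c1 = 0.0  # integral of y2 f / W  (so c1 = -int_c1)
    int_c2 = 0.0  # integral of y1 f / W  (so c2 = +int_c2)
    for k in range(n + 1):
        T = k * h
        weight = 0.5 if k in (0, n) else 1.0  # trapezoid end-point weights
        w = wronskian(T)
        int_c1 += weight * y2(T) * f(T) / w
        int_c2 += weight * y1(T) * f(T) / w
    return -y1(t) * int_c1 * h + y2(t) * int_c2 * h


# Example (assumed): y'' + y = 1 with y1 = cos, y2 = sin.
# The exact particular solution with y(0) = y'(0) = 0 is 1 - cos t.
t = 2.0
approx = variation_of_parameters(math.cos, math.sin, lambda T: -math.sin(T), math.cos,
                                 lambda T: 1.0, t)
print(approx, 1 - math.cos(t))
```

For this example W is identically 1, and the two printed numbers should agree to about six decimal places.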

Well, I won't completely stop there. Let me do an example. My example will be a constant coefficient equation.

What are the null solutions for a constant coefficient equation? You remember what happens with constant coefficients: you try an exponential, e to the st, and it works. You plug in and get s squared plus Bs plus C, times e to the st, equal to 0.

This is looking for null solutions, because we have to have those to start. They are the y1 and y2 in my formula. The variation of parameters formula, the whole new thing, is already complete. But if I want an example, I have to solve the null equation, and for that, believe me, I'm going to make B and C constant.

So I solve this equation. Of course, I can cancel the e to the st, because it's never 0. So s squared plus Bs plus C has to be 0. That has two roots, s1 and s2, and the quadratic formula tells me what they are. Then my null solutions are y1 = e to the s1t and y2 = e to the s2t.
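The null solutions come from the two roots of s^2 + Bs + C = 0. Here is a small sketch of the quadratic formula using `cmath.sqrt`, so complex roots (oscillating null solutions) come out naturally; the function names are hypothetical. If the two roots coincide, e to the s1t and e to the s2t are the same function, so this pair cannot serve as y1 and y2.

```python
import cmath


def characteristic_roots(B, C):
    """Return the roots s1, s2 of s^2 + B s + C = 0 (quadratic formula).
    cmath.sqrt handles a negative discriminant, giving complex roots."""
    disc = cmath.sqrt(B * B - 4 * C)
    s1 = (-B + disc) / 2
    s2 = (-B - disc) / 2
    return s1, s2


def null_solutions(B, C):
    """Return y1(t) = e^{s1 t} and y2(t) = e^{s2 t} as functions of t."""
    s1, s2 = characteristic_roots(B, C)
    return (lambda t: cmath.exp(s1 * t)), (lambda t: cmath.exp(s2 * t))


print(characteristic_roots(3, 2))  # y'' + 3y' + 2y = 0: roots -1 and -2
print(characteristic_roots(0, 1))  # y'' + y = 0: roots i and -i
```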

Now, I'm ready to go. I'm going to put those into this formula. I have to do the Wronskian, and I need another blackboard. Sorry. This is the only video so far that went to a third board.

I need the Wronskian, and then I'll reproduce the formula. Let me copy again.

y1 is e to the s1t and y2 is e to the s2t. The Wronskian is y1 times y2 prime, minus y2 times y1 prime. And what does that come out to be?

y1 is e to the s1t. y2 prime is the derivative of e to the s2t, so it's s2 e to the s2t. That gives the first term. Now I subtract y2, e to the s2t, times y1 prime; the derivative of e to the s1t brings down an s1. So W is (s2 minus s1) times e to the s1t times e to the s2t. You see the beauty of all these formulas. Well, beautiful if you like formulas; not everybody does. OK.
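The board computation in symbols. The result is never zero as long as s1 and s2 are different, which is exactly what the variation of parameters formula needs.

```latex
\begin{aligned}
W(t) &= y_1 y_2' - y_2 y_1'
      = e^{s_1 t}\,\bigl(s_2 e^{s_2 t}\bigr) - e^{s_2 t}\,\bigl(s_1 e^{s_1 t}\bigr) \\
     &= (s_2 - s_1)\, e^{s_1 t} e^{s_2 t} = (s_2 - s_1)\, e^{(s_1 + s_2) t}
\end{aligned}
```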

So now I know all the terms, and I'm prepared to write my formula for y of t, my solution, again. This is the climax now. I'm going to use the formula that came from variation of parameters, put in y1, y2, and that W, and see what I have. OK.

Remember, it was y1 times an integral. Oh, I had better use a different variable of integration here. I don't want to use t inside, because t is the limit of integration; I'll use capital T. So it's y1 times the integral of minus y2, which is minus e to the s2T, divided by W.

Remember, there's an f of T and there's a dT, with capital T as the dummy variable. And in the denominator goes W, which is (s2 minus s1) e to the s1T e to the s2T.

That was the first term; this was the c1 that multiplied y1. Now I have to remember y2, the second null solution, times its c2, and c2 was also an integral.

This time there is a plus sign, and the same W goes in the denominator.

So it's e to the s2t times the integral of e to the s1T f of T, divided by that same W, (s2 minus s1) e to the s1T e to the s2T, dT. OK.
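Putting y1 = e^{s1 t}, y2 = e^{s2 t}, and this W into the general formula, with capital T as the dummy variable and the integrals taken from 0 to t (the limits the lecture states at the end), gives:

```latex
y(t) = e^{s_1 t} \int_0^t \frac{-e^{s_2 T} f(T)}{(s_2 - s_1)\, e^{s_1 T} e^{s_2 T}}\, dT
     \;+\; e^{s_2 t} \int_0^t \frac{e^{s_1 T} f(T)}{(s_2 - s_1)\, e^{s_1 T} e^{s_2 T}}\, dT
```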

That's the variation of parameters formula for these nice null solutions. It doesn't get better than this. And in fact, I can cancel e to the s2T in the first integral and e to the s1T in the second. What's left in each denominator I can move up with a minus exponent.

Oh yeah, it's going to be good.

So here is a constant, s2 minus s1, the same for both terms, so I get 1 over s2 minus s1 in front. You might even see this coming. Then I have the integral.

Here I have f of T and dT in both integrals. What else do I have? The factor e to the s1t in front doesn't depend on T, so I can put it inside the integral, with a small t; I'm not integrating over that. Together with e to the minus s1T, that gives e to the s1 times (t minus T), and the first term comes with a minus sign. Does that look good to you for the first term?

And the second term has that same 1 over s2 minus s1. Now, what do I have? e to the s2T comes up with a minus sign in the exponent, so I have the integral of e to the s2 times (t minus T); there's the minus. Haha. Don't forget that minus, Professor Strang. And f of T, dT.
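After the cancellations, and after bringing the T-independent factors e^{s1 t} and e^{s2 t} inside the integrals, the two terms combine into one integral:

```latex
\begin{aligned}
y(t) &= -\frac{1}{s_2 - s_1} \int_0^t e^{s_1 (t - T)} f(T)\, dT
        \;+\; \frac{1}{s_2 - s_1} \int_0^t e^{s_2 (t - T)} f(T)\, dT \\
     &= \int_0^t \frac{e^{s_2 (t - T)} - e^{s_1 (t - T)}}{s_2 - s_1}\, f(T)\, dT
\end{aligned}
```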

Now, right there I'm going to put the answer, and for me, this was the highlight of the whole thing. I didn't know what would come out of variation of parameters; I'm not at all an expert in that. But I just followed the rules: put in these two null solutions, computed W, put it all in, ended up with this answer, and then I was very happy to recognize what this answer was. It is the integral from 0 to t of something times f of T, dT. And what is that something? It's e to the s2(t minus T) minus e to the s1(t minus T), divided by s2 minus s1. And what is that but the impulse response, what I've called g of t, evaluated at t minus T.

So here you go. This is the big moment.

y of t is the integral of g of t minus capital T, times f of T, dT. Focus camera and attention on that last result. So that's the formula we end up with from variation of parameters applied to the constant coefficient problem. It's given us what we already knew. It tells us that the particular solution y of t is the integral of the input (the right-hand side, the forcing term, f of T) times the growth term, the impulse response.
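The kernel in that integral is the constant coefficient impulse response. A quick check using only its formula: g is a combination of the two null solutions, it starts at g(0) = 0, and its initial slope is g'(0) = 1. That is the response of y'' + By' + Cy to a unit impulse at t = 0.

```latex
\begin{aligned}
g(t) &= \frac{e^{s_1 t} - e^{s_2 t}}{s_1 - s_2}
      = \frac{e^{s_2 t} - e^{s_1 t}}{s_2 - s_1}, \qquad
g(0) = 0, \quad g'(0) = \frac{s_1 - s_2}{s_1 - s_2} = 1 \\
y_p(t) &= \int_0^t g(t - T)\, f(T)\, dT
\end{aligned}
```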

We could imagine that at every time T, we have an impulse of size f of T. That impulse grows by the impulse response g over the remaining time, t minus T, until it gets us to time t.

Let me just clean that up.

And then we have to take all those inputs, so we integrate over all of them, and we get that answer. That's the ultimate formula for the solution to our linear constant coefficient differential equation. So we've reproduced that formula in the constant coefficient case, where we can find the null solutions and run this variation of parameters formula right through to the end. And that's the end.
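The impulse-response picture translates directly into a numerical sketch: add up the contributions g(t - T) f(T) over all input times T. This assumes constant B and C with distinct roots and uses the trapezoid rule (again a swapped-in numerical choice); the function names are hypothetical. Complex roots are allowed, and the imaginary part of the result cancels for real B, C, and f.

```python
import cmath
import math


def impulse_response(B, C):
    """g(t) = (e^{s1 t} - e^{s2 t}) / (s1 - s2) for y'' + B y' + C y,
    where s1, s2 are the roots of s^2 + B s + C = 0 (assumed distinct)."""
    disc = cmath.sqrt(B * B - 4 * C)
    s1, s2 = (-B + disc) / 2, (-B - disc) / 2
    if abs(s1 - s2) < 1e-12:
        raise ValueError("repeated root: this formula for g needs s1 != s2")
    return lambda t: (cmath.exp(s1 * t) - cmath.exp(s2 * t)) / (s1 - s2)


def particular_solution(B, C, f, t, n=2000):
    """y_p(t) = integral from 0 to t of g(t - T) f(T) dT (trapezoid rule)."""
    g = impulse_response(B, C)
    h = t / n
    total = 0j
    for k in range(n + 1):
        T = k * h
        weight = 0.5 if k in (0, n) else 1.0
        total += weight * g(t - T) * f(T)  # impulse f(T), grown for time t - T
    return (total * h).real  # imaginary parts cancel for real B, C, f


# Check (assumed example): y'' + 3y' + 2y = 1 with y(0) = y'(0) = 0
# has the exact solution 1/2 - e^{-t} + (1/2) e^{-2t}.
t = 1.5
print(particular_solution(3, 2, lambda T: 1.0, t),
      0.5 - math.exp(-t) + 0.5 * math.exp(-2 * t))
```

For B = 3, C = 2 the roots are -1 and -2, so g(t) = e^{-t} - e^{-2t}; for B = 0, C = 1 the roots are i and -i, and g(t) reduces to sin t.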

Thank you.