Implement Point Cloud SLAM in MATLAB

A point cloud is a set of points in 3-D space. Point clouds are typically obtained from 3-D scanners, such as a lidar or Kinect® device. They have applications in robot navigation and perception, depth estimation, stereo vision, visual registration, and advanced driver assistance systems (ADAS).

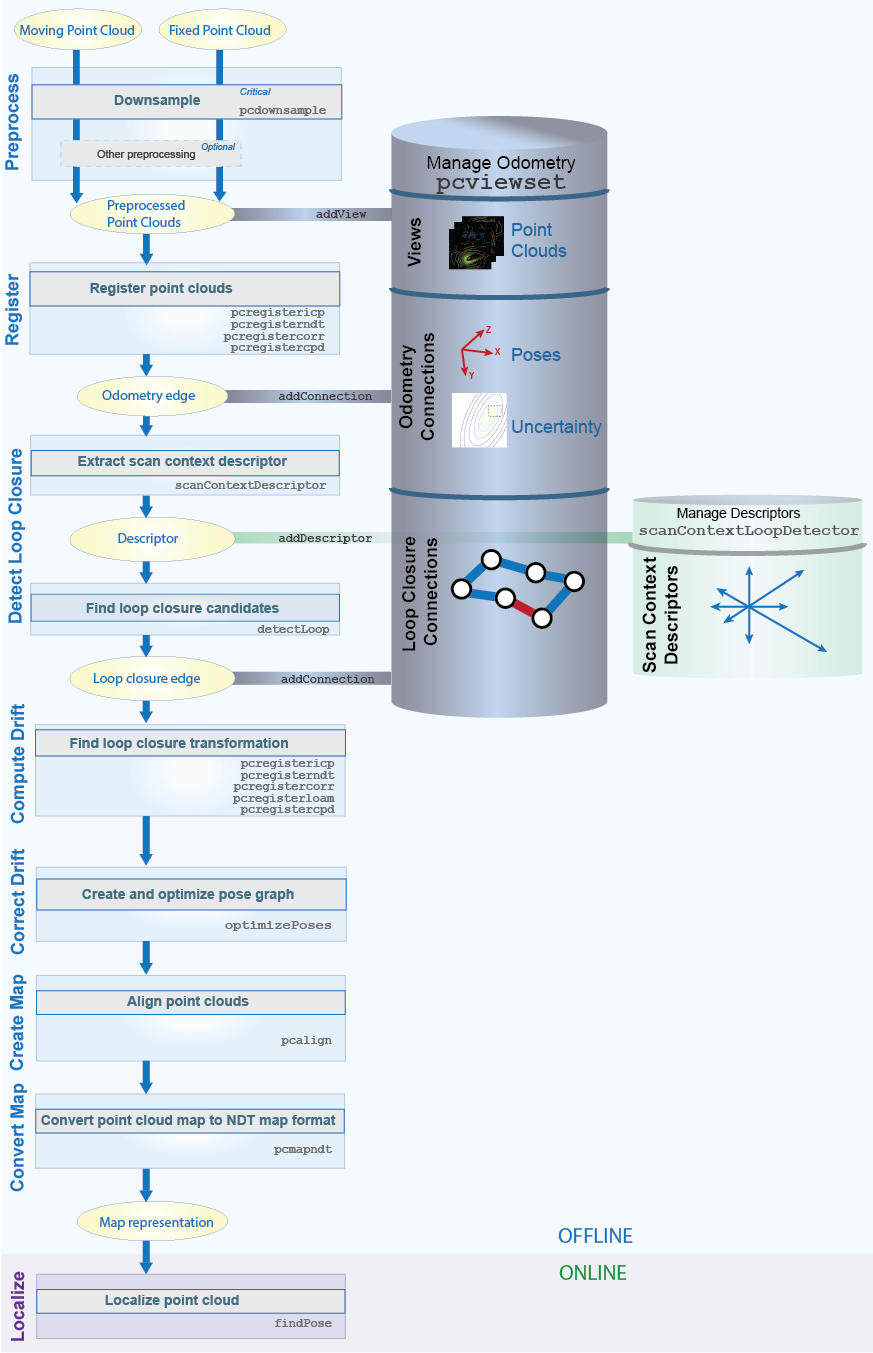

Point cloud registration is the process of aligning two or more 3-D point clouds of the same scene into a common coordinate system. Mapping is the process of building a map of the environment around a robot or a sensor. You can use registration and mapping to reconstruct a 3-D scene or build a map of a roadway for localization. While registration commonly precedes mapping, there are other applications for registration, that may not require mapping, such as deformable motion tracking. Computer Vision Toolbox™ algorithms provide functions for performing point cloud registration and mapping. The workflow consists of preprocessing, registration, drift correction, and alignment of point clouds.

Simultaneous localization and mapping (SLAM) refers to the process of calculating the position and orientation of a vehicle, with respect to its surroundings, while simultaneously mapping the environment. The process uses only point cloud inputs from a sensor. Applications for point cloud SLAM include robotics and autonomous driving. For a general description on why SLAM matters and how it works for different applications, see What is SLAM?

Mapping and Localization Workflow

Follow these steps to perform point cloud registration and mapping on a sequence of point clouds. Then you can localize the vehicle in the prebuilt map.

Preprocess Point Clouds — To prepare the point clouds for registration, downsample them and remove unwanted features and noise.

Register Point Clouds — Register each point cloud against the one preceding it. These registrations are used in odometry, which is the process of accumulating a registration estimate over successive frames. Using odometry alone can lead to drift between the measured and ground truth poses.

Detect Loops — Perform loop closure detection to minimize drift. Loop closure detection is the process of identifying the return of the sensor to a previously visited location, which forms a loop in the trajectory of the sensor.

Correct Drift — Use the detected loops to minimize drift through pose graph optimization, which consists of incrementally building a pose graph by adding nodes and edges, and then optimizing the pose graph once you have found sufficient loops. Pose graph optimization results in a set of optimized absolute poses.

Assemble Map — Assemble a point cloud map by aligning the registered point clouds using their optimized absolute poses. You can use such a prebuilt point cloud map for Localization, which is the process of locating the vehicle within the map.

Localize — Find the pose of the vehicle based on the assembled map.

Manage Data for Mapping and Localization

Use these objects to manage data associated with the point cloud registration and mapping workflow:

pointCloudobject — The point cloud object stores a set of points located in 3-D space. It uses efficient indexing strategies to accomplish nearest neighbor searches, which are leveraged by point cloud preprocessing and registration functions.rigidtform3dobject — The rigid 3-D object stores a 3-D rigid geometric transformation. In this workflow, it represents the relative and absolute poses.pcviewsetobject — The point cloud view set object manages the data associated with the odometry and mapping process. It organizes data as a set of views and pairwise connections between views. It also builds and updates a pose graph.Each view consists of a point cloud and the associated absolute pose transformation. Each view has a unique identifier within the view set and forms a node of the pose graph.

Each connection stores information that links one view to another view. This includes the relative transformation between the connected views and the uncertainty involved in computing the measurement. Each connection forms an edge in the pose graph.

scanContextLoopDetectorobject — The loop closure detection object stores scan context descriptors with a corresponding view ID. The ID associates a detected loop closure to a view inpcviewset.pcmapndtobject — The NDT map object stores a compressed, memory-efficient map representation for localization. The object converts the point cloud map into a set of voxels (3-D boxes), each voxel represented by a 3-D normal distribution.

Preprocess Point Clouds

Preprocessing includes removing unwanted features and noise from the point clouds, and segmenting or downsampling them. Preprocessing can include these functions:

pcdownsample— Downsample the point cloud.pcsegdistorsegmentLidarData— Segment the point cloud data into clusters, then use theselectfunction to select the desired points.pcfitplane,segmentGroundFromLidarData, orsegmentGroundSMRF(Lidar Toolbox) — Segment the ground plane, then use theselectfunction to select the desired points.pcdenoise— Remove unwanted noise from the point cloud.

Register Point Clouds

You can use the pcregisterndt, pcregistericp, pcregistercorr, pcregisterloam (Lidar Toolbox), or pcregistercpd function to register a moving point cloud to a fixed point

cloud. The registration algorithms used by these functions are based on the

normal-distributions transform (NDT) algorithm, the iterative closest point (ICP)

algorithm, a phase correlation algorithm, the lidar odometry and mapping (LOAM)

algorithm, and the coherent point drift (CPD) algorithm, respectively. For more

information on these algorithms, see References.

When registering a point cloud, choose the type of transformation that represents how objects in the scene change between the fixed and moving point clouds.

| Transformation | Description |

|---|---|

| Rigid | The rigid transformation preserves the shape and size of objects in the scene. Objects in the scene can undergo translations, rotations, or both. The same transformation applies to all points. |

| Affine | The affine transformation allows the objects to shear and change scale in addition to undergoing translations and rotations. |

| Nonrigid | The nonrigid transformation allows the shape of objects in the scene to change. Points undergo distinct transformations. A displacement field represents the transformation. |

This table compares the point cloud registration function options, their transformation types, and their performance characteristics. Use this table to help you select the appropriate registration function for your use case.

| Registration Method (function) | Transformation Type | Description | Performance Characteristics |

|---|---|---|---|

pcregisterndt | Rigid |

| Provide an initial estimate to enable the algorithm to converge faster. |

pcregistericp | Rigid |

| |

pcregistercorr | Rigid |

| Decrease the size of the occupancy grids to decrease the computational requirements of the function. |

pcregisterloam (Lidar Toolbox) | Rigid |

| Provides increased control between the processing speed versus registration accuracy Use the |

pcregisterfgr (Lidar Toolbox) | Rigid |

|

|

pcregistercpd | Rigid, affine, and nonrigid |

| Slowest registration method. Not recommended for map building. |

Registering the current (moving) point cloud against the previous

(fixed) point cloud returns a rigidtform3d transformation that represents the estimated relative pose of

the moving point cloud in the frame of the fixed point cloud. Composing this relative

pose transformation with all previously accumulated relative pose transformations gives

an estimate of the absolute pose transformation.

Add the view formed by the moving point cloud and its absolute pose transformation to

the view set. You can add the view to the pcviewset

object using the addView

function.

Add the odometry edge, an edge defined by the connection

between successive views, formed by the relative pose transformation between the fixed

and moving point clouds to the pcviewset

object using the addConnection function.

Tips for Registration

Local registration methods, such as those that use NDT or ICP (

pcregisterndtorpcregistericp, respectively), require initial estimates for better performance. To obtain an initial estimate, use another sensor such as an inertial measurement unit (IMU) or other forms of odometry.For increased accuracy in registration results, increase the value for the

'MaxIterations'argument or decrease the value for the'Tolerance'argument. Changing these values in this way consequently slows registration speed.Consider downsampling point clouds using

pcdownsample, before usingpcregisterndt,pcregistericp, orpcregistercpd, to improve the efficiency and accuracy of registration.Denoising using

pcdenoisebefore registration can improve registration accuracy, but it can slow down the execution time of the map building workflow.

Detect Loops

Using odometry alone leads to drift due to accumulation of errors. These errors can result in severe inaccuracies over long distances. Using graph-based simultaneous localization and mapping (SLAM) corrects the drift. To do this, detect loop closures by finding a location visited in a previous point cloud using descriptor matching. Use loop closures to correct for accumulated drift. Follow these steps to detect loop closures:

Use the

scanContextDescriptorfunction to extract scan context descriptors, which capture the distinctiveness of a point cloud.Add the scan context descriptors to the

scanContextLoopDetectorusingaddDescriptor.Use the

detectLoopfunction to find potential loop closures.Register the point clouds to determine the relative pose transformation between the views and the root mean square error (RMSE) of the Euclidean distance between the aligned point clouds. Use the RMSE to filter invalid loop closures. The relative pose transformation represents a connection between the two views. An edge formed by a connection between nonsuccessive views is called a loop closure edge. You can add the connection to the

pcviewsetobject using theaddConnectionfunction.

For an alternative approach to loop closure detection based on segment matching, refer

to the findPose (Lidar Toolbox)

function.

Correct Drift

The pcviewset

object internally updates the pose graph as views and connections are added. To minimize

drift, perform pose graph optimization by using the optimizePoses function, once sufficient loop closures are detected. The

optimizePoses function returns a pcviewset

object with the optimized absolute pose transformations for each view.

You can use the createPoseGraph function to return the pose graph as a MATLAB®

digraph object. You can use graph algorithms in MATLAB to inspect, view, or modify the pose graph. Use the optimizePoseGraph (Navigation Toolbox) function to optimize the modified pose graph, and then

use the updateView function to update the poses in the view set.

Assemble Map

Use the pcalign

function to build a point cloud map using the point clouds from the view set and their

optimized absolute pose transformations. This point cloud map can now be used for online

localization using the NDT localization algorithm.

Localize Vehicle in Map

Convert the prebuilt point cloud map to the NDT map format using the pcmapndt

object. The pcmapndt

object stores the map in a compressed voxel representation that can be saved to disk and

used for online localization. Use the findPose

function to localize in the map.

Alternate Workflows

Alternative workflows for map building and localization are available in Computer Vision Toolbox, Navigation Toolbox™, and Lidar Toolbox™.

Visual SLAM using Computer Vision Toolbox features — Calculate the position and orientation of a camera with respect to its surroundings, while simultaneously mapping the environment. For more details, see Implement Visual SLAM in MATLAB.

Build an occupancy map using Navigation Toolbox features — Build an occupancy map from point clouds. For details, see Perform SLAM Using 3-D Lidar Point Clouds (Navigation Toolbox).

Segment matching using Lidar Toolbox features — Build a map representation of segments and features using the

pcmapsegmatch(Lidar Toolbox) object. Use thefindPose(Lidar Toolbox) function for loop closure detection and localization. This approach is robust to dynamic obstacles and is recommended for large scale environments. For an example of this approach, see the Build Map and Localize Using Segment Matching (Lidar Toolbox) example. The table highlights the similarities and differences between thepcmapndtandpcmapsegmatch(Lidar Toolbox) map representations.Workflow pcmapndtpcmapsegmatchAlgorithm Normal distributions transform (NDT) SegMatch — segment matching approach Mapping Build the map first — Incrementally build the map using pcviewset. Then, usepcalignto assemble the map and convert the prebuilt map to an NDT map representation.Build the map incrementally using pcmapsegmatch(Lidar Toolbox) — Add views topcviewset(usingaddView) and topcmapsegmatch(Lidar Toolbox) (usingaddView(Lidar Toolbox)) for each point cloud scan. Detect loop closures usingfindPose(Lidar Toolbox) and correct for accumulated drift withoptimizePoses.Localization Similarity Select a submap for localization, and then find the pose for localization using one set of the following options:

The

selectSubmapandfindPosefunctions of thepcmapndtobject.The

selectSubmap(Lidar Toolbox) andfindPose(Lidar Toolbox) functions of thepcmapsegmatch(Lidar Toolbox) object.

Localization Difference Relies on a pose estimate. Does not rely on a pose estimate. Visualization Visualize the map or selected submap using the showfunction of thepcmapndtobject or theshow(Lidar Toolbox) function of thepcmapsegmatch(Lidar Toolbox) object.

References

[1] Myronenko, Andriy, and Xubo Song. “Point Set Registration: Coherent Point Drift.” IEEE Transactions on Pattern Analysis and Machine Intelligence 32, no. 12 (December 2010): 2262–75. https://doi.org/10.1109/TPAMI.2010.46

[2] Chen, Yang, and Gérard Medioni. “Object Modelling by Registration of Multiple Range Images.” Image and Vision Computing 10, no. 3 (April 1992): 145–55. https://doi.org/10.1016/0262-8856(92)90066-C.

[3] Besl, P.J., and Neil D. McKay. “A Method for Registration of 3-D Shapes.” IEEE Transactions on Pattern Analysis and Machine Intelligence 14, no. 2 (February 1992): 239–56. https://doi.org/10.1109/34.121791.

[4] Biber, P., and W. Strasser. “The Normal Distributions Transform: A New Approach to Laser Scan Matching.” In Proceedings 2003 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2003) (Cat. No.03CH37453), 3:2743–48. Las Vegas, Nevada, USA: IEEE, 2003. https://doi.org/10.1109/IROS.2003.1249285.

[5] Magnusson, Martin. “The Three-Dimensional Normal-Distributions Transform: An Efficient Representation for Registration, Surface Analysis, and Loop Detection.” PhD thesis, Örebro universitet, 2009.

[6] Dimitrievski, Martin, David Van Hamme, Peter Veelaert, and Wilfried Philips. “Robust Matching of Occupancy Maps for Odometry in Autonomous Vehicles.” In Proceedings of the 11th Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications, 626–33. Rome, Italy: SCITEPRESS - Science and Technology Publications, 2016. https://doi.org/10.5220/0005719006260633.

[7] Zhang, Ji, and Sanjiv Singh. “LOAM: Lidar Odometry and Mapping in Real-Time.” In Robotics: Science and Systems X. Robotics: Science and Systems Foundation, 2014. https://doi.org/10.15607/RSS.2014.X.007.

See Also

Functions

pcregistercorr|pcregisterndt|pcregistericp|pcregistercpd|pcalign|scanContextDistance|scanContextDescriptor|findPose|show|segmentGroundSMRF(Lidar Toolbox)

Objects

Topics

- What is SLAM?

- What Are Organized and Unorganized Point Clouds? (Lidar Toolbox)

- Build a Map from Lidar Data Using SLAM

- Implement Visual SLAM in MATLAB

- Design Lidar SLAM Algorithm Using Unreal Engine Simulation Environment (Automated Driving Toolbox)

- 3-D Point Cloud Registration and Stitching

- Build a Map from Lidar Data

- Build Map and Localize Using Segment Matching (Lidar Toolbox)